I can understand panicking about AI chatbots if you think they show that AI is shockingly capable. I’m around a lot of people worried about AI mass unemployment, potential for misuse to lock in authoritarian governance, and extinction risks. When I use chatbots today, I feel in my bones that they are better than me at a lot of the things I’ve used to build up status for myself. It’s hard to imagine the next 40 years of my life not involving some point where a lot of the moats I’ve dug are filled by some future AI. While there’s a lot of potential for huge positive effects as well, I’m pretty sympathetic to people becoming uneasy after interacting with chatbots as they exist.

I’m also sympathetic to people who worry that chatbots as they exist are massively overblown, that we’re in a pretty intense hype cycle, that today’s AI models aren’t reliably producing accurate or novel enough results to justify the money and energy being spent on them, and that scaling is now producing such diminishing returns that something is about to blow up. I still have plenty of experiences with ChatGPT, Claude, and Gemini where they’ll give me results I know to be straightforwardly wrong or made up.

But there’s a third completely different reaction, which I think of as the chatbot moral panic view, that combines three contradictory beliefs:

1. Chatbots are phenomenally stupid, useless, and incapable.

2. Chatbots cannot provide anything of value by definition.

3. Chatbots are demonic. There is something ominous and evil about using them, beyond any measurable clear harm. The person might list a lot of specific harms chatbots cause, but it’s clear that even if all of the problems were solved, the person would still have some deeper moral revulsion. Their concern about specific problems is post hoc and disproportionate to the problems themselves, and goes way beyond their concern about other online tools causing similar problems.

3 contradicts 1 and 2 because it seems hard for something ineffectual to also be deeply evil. 1 contradicts 2 because, if something by definition cannot produce anything of value, it doesn’t make sense to complain that it’s very far down on some axis of quality. It seems like complaining that a rock can barely sing at all.

Yet I keep bumping into people who seem to hold all three beliefs at once.

This is anthropologically interesting. Chatbots feel to me kind of like Wolfram Alpha used to when I was in college, but it’s very hard to imagine anyone having such a strong moral reaction to Wolfram Alpha, or Wikipedia, or YouTube, or any other website or online app that doesn’t clearly involve some direct harm. There’s something really getting to people about the nature of chatbots.

I’ve developed an armchair psychology theory for why this is happening. My confidence in this isn’t high, but it’s been interesting enough that I find myself coming back to it when I’m having conversations about chatbots, so I figured I’d submit it for review here. This is my most hand-wavy post.

Chatbots remove our ability to associate information with specific high and low-status people

Years ago I lost a friend to a cult. There were clear indicators that he was vulnerable to cult dynamics I should have picked up on ahead of time, including that he was:

Hypersensitive to his personal sense of status in any given conversation or situation

Not receiving much status in his personal life

Lurching toward more and more desperate means of getting it

Easily compelled by big flashy contrarian ideas, even if they very easily fell apart when poked at

and this was all exacerbated by some underlying mental health stuff.

I remember the first time I noticed something was deeply wrong. We were walking around Boston and I had made a joke about how I needed to swing by a bookstore to pick up the latest solarpunk manifesto. He suddenly exploded into a 20-minute nonstop monologue about how solarpunk was “fascist” (you can read about it here and judge for yourself), citing a pretty incomprehensible pile of reasons that he implied he had some special deep understanding of, one that was inaccessible to me. I was too disoriented and unnerved by this to think much about what was actually happening here: my friend was positioning himself as a special arbiter of truth and ethics as a way of feeling like he had conversational power in the moment. He wasn’t making an argument for something being false or unethical. If he had been, he would have built some kind of conversational bridge where I could join him in the debate. Instead, he was specifically putting up incoherent conversational walls to imply he had accessed some special Platonic realm of truth I couldn’t possibly access myself. His brain was short-circuiting out of a desire to feel important (a pretty fundamental evolutionary drive) and mashing any buttons it could, like a desperately hungry animal clawing for food.

I realized years later that my friend was actually just doing an extreme version of bad behavior we all indulge in at different levels: setting the boundaries of which people and what ideas are considered high and low-status exclusively to flaunt our social power and importance.

One way this often comes up is in conversations at parties. People will often bring up the names of people who hold specific beliefs, and a lot of the debates about the quality of the beliefs will be shifted to debates about the quality of the people who believe them. When people I meet at parties talk to me about ChatGPT and the environment, it’s very rare that the conversation stays on the topic of watt-hours or milliliters of water or the IPCC report, and it very frequently turns to Elon Musk or Mark Zuckerberg or Republicans or climate deniers. These characters often exist in the conversation more as spells one tries to cast than as arguments. If you can associate me with Elon or Zuckerberg, you’ve cast me out as the bad guy and won the argument, regardless of the hyper-specific facts of how much energy a prompt happens to use. If you’re able to cast people out into the low-status crowd, that implies you have a lot of conversational power and status. It’s like you’re a conversational wizard, and the person you’re casting out has no power of their own. It can be an intoxicating move to make. This is a much less extreme version of my friend attempting to associate me with fascists. Just like my friend wanted to feel like he had power in that moment to cast me out into the low-status community and win the conversation, most of us will often indulge in invoking low-status names specifically to cast out our conversational opponents, even going so far as to completely ignore the specifics of their claims and ideas. In fact, like my friend implying he had some secret access to knowledge I couldn’t have, entertaining the specifics of a claim gives your enemy too much power. It gives them a way to raise their evil idea’s status, which you can’t let them do. Thus, raw information without an associated high or low-status person is dangerous to entertain, and you need to ignore it when possible.

Even there, in that last paragraph, I associated people who criticize me with my friend who went crazy and joined a cult. Aren’t they so low-status? Gross!

I’m not claiming to be above this dynamic, but I do think I and most people I know who I consider mature can recognize this tendency in ourselves and work to build up our understanding of facts on their own and see where they lead. I try whenever I’m in a disagreement to speak without using the names of high or low-status people. Still, it’s very easy to fall back, and there are a million other ways status guides my thinking. The best we can do is try to focus on the facts when we can.

This is pretty fundamental to how a lot of people move through the world: identifying the good guys and associating with them, and identifying the bad guys and casting them out. It’s very easy for people to go long periods of time where they more or less ignore the specific contents of a lot of their beliefs and just collect them as little talismans, signs that they’re part of the elect. This is a very common theme of my posts. I’d noted this dynamic in my first post on AI and the environment:

On a meta level, there’s a background assumption about how one is supposed to think about climate change that I’ve become exhausted by, and that the AI emissions conversation is awash in. The bad assumption is:

To think and behave well about the climate you need to identify a few bad individual actors/institutions and mostly hate them and not use their products. Do not worry about numbers or complex trade-offs or other aspects of your own lifestyle too much. Identify the bad guys and act accordingly.

Climate change is too complex, important, and interesting as a problem to operate using this rule. When people complain to me about AI emissions I usually interpret them as saying “I’m a good person who has done my part and identified a bad guy. If you don’t hate the bad guy too, you’re suspicious.” This is a mind-killing way of thinking. I’m using this post partly to demonstrate how I’d prefer to think about climate instead: we coldly look at the numbers, institutions, and actors that we can actually collectively influence, and we respond based on where we will actually have the most positive effect on the future, not based on who we happen to be giving status to in the process.

I’d brought it up in my post “People’s deeply held beliefs are surprisingly surface level” too:

A lot of people (myself included) have a lot of internal illusions about how deep our deeply held beliefs go. I used to think that if someone were structuring their lives around a specific idea, and describing it as one of their most deeply held beliefs, their process for getting to it looked like this:

I have investigated and compared this contentious belief to others and tested it against the world, and after a lot of careful diligent thought I have decided it is so uniquely powerful as an explanatory tool that I have decided to structure my life around it.

I’ve realized now that a lot of the time, they (and I) are doing something more like this:

I have muttered this basic idea to myself repeatedly for years to make myself feel important. I first found this idea because a person with a cool jacket said it. I wanted to be more like them.

This happens a lot in how people approach books to read. My favorite passage about this (which I’ve shared previously) is from Knausgård’s autobiography:

Espen probably didn’t know this himself, since I always pretended to know most things, but he pulled me up into the world of advanced literature, where you wrote essays about a line of Dante, where nothing could be made complex enough, where art dealt with the supreme, not in a high-flown sense because it was the modernist canon with which we were engaged, but in the sense of the ungraspable, which was best illustrated by Blanchot’s description of Orpheus’s gaze, the night of the night, the negation of the negation, which of course was in some way above the trivial and in many ways wretched lives we lived, but what I learned was that also our ludicrously inconsequential lives, in which we could not attain anything of what we wanted, nothing, in which everything was beyond our abilities and power, had a part in this world, and thus also in the supreme, for books existed, you only had to read them, no one but myself could exclude me from them. You just had to reach up.

Modernist literature with all its vast apparatus was an instrument, a form of perception, and once absorbed, the insights it brought could be rejected without its essence being lost, even the form endured, and it could then be applied to your own life, your own fascinations, which could then suddenly appear in a completely new and significant light. Espen took that path, and I followed him, like a brainless puppy, it was true, but I did follow him. I leafed through Adorno, read some pages of Benjamin, sat bowed over Blanchot for a few days, had a look at Derrida and Foucault, had a go at Kristeva, Lacan, Deleuze, while poems by Ekelöf, Björling, Pound, Mallarmé, Rilke, Trakl, Ashbery, Mandelstam, Lunden, Thomsen, and Hauge floated around, on which I never spent more than a few minutes, I read them as prose, like a book by MacLean or Bagley, and learned nothing, understood nothing, but just having contact with them, having their books in the bookcase, led to a shifting of consciousness, just knowing they existed was an enrichment, and if they didn’t furnish me with insights I became all the richer for intuitions and feelings.

Now this wasn’t really anything to beat the drums with in an exam or during a discussion, but that wasn’t what I, the king of approximation, was after. I was after enrichment. And what enriched me while reading Adorno, for example, lay not in what I read but in the perception of myself while I was reading. I was someone who read Adorno!

When I walk around in popular bookstores, a lot of the books seem to be specifically designed to be displayed rather than read. If I open a book with a maximally exciting cover and title like “Capital-Feudalism Beyond the Anthropocene” its contents often seem specifically designed to be read quickly and mostly just massage the reader’s pre-existing tastes and preferences, maybe a lot of talk about how “We need to start to begin to think about what commoditization in the post-anthropocene will make of our relations to one another” without much concrete challenging engagement with actual social theory or history or climate science or economics, or any specific claims at all beyond invocations of other high and low-status authors. I suspect a lot of people buy these books primarily to be the type of people who display them on their shelves.

I’m not free of sin at all. In a lot of cases when I’m walking around a bookstore, if I pick up a book, I feel a strong urge to buy it to be the type of guy who’s read it, not only because I really want to have the information it contains. I think for a lot of people, the information in the book itself is often not actually as important as the book serving as a social ritual to ascend into the realm of the people who read and engage with this sort of thing. Books often also function as a useful barrier protecting the reader from the evil ideas circulating in broader society. If you put in the work of discovering the good, virtuous authors, you can trust that what they share in their book has been filtered by the good people, and the contents are acceptable for high-status people to believe.

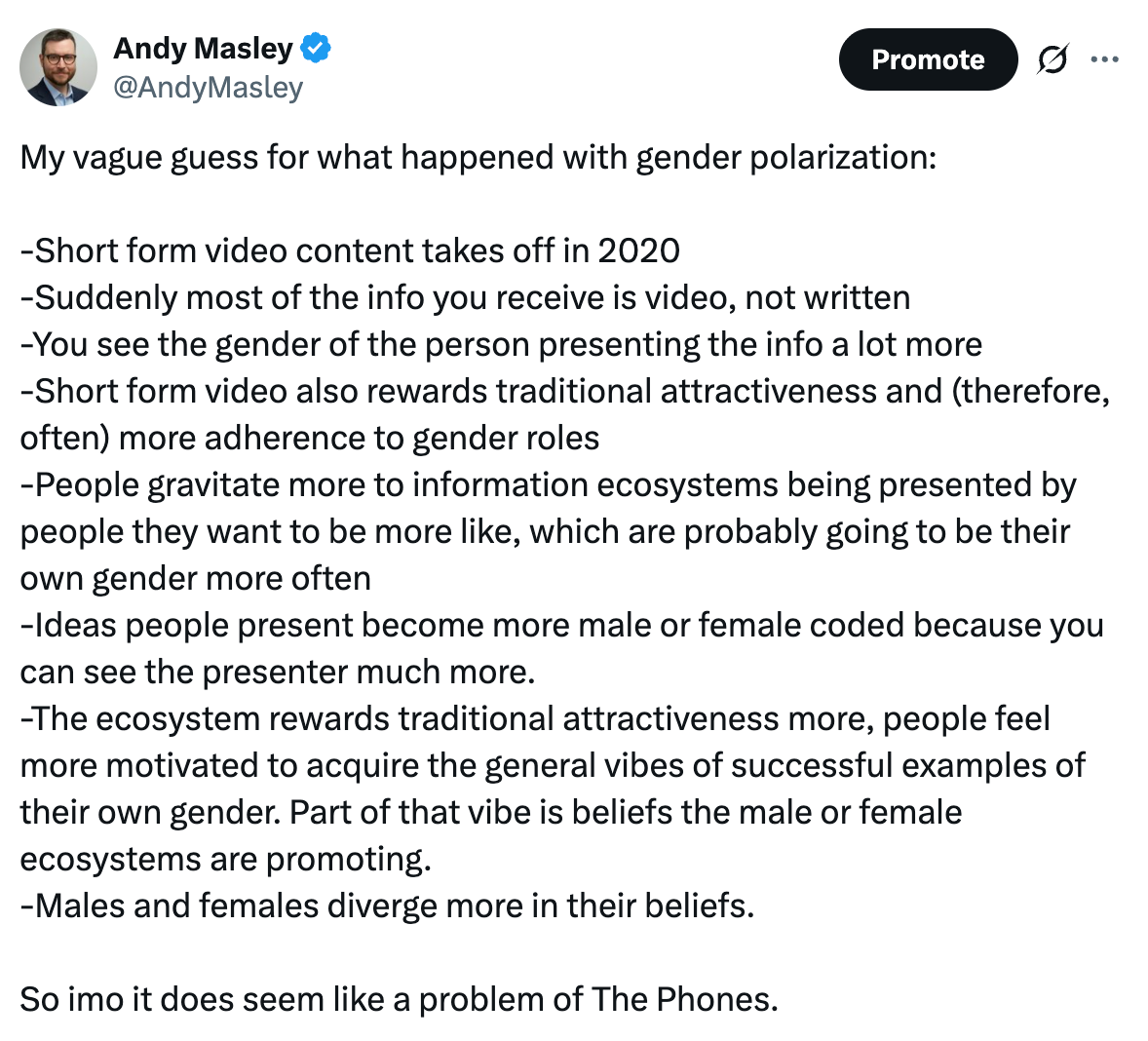

Visual media also works this way. People discover YouTubers and streamers and TikTok presenters they like, who they perceive as high-status. The ideas these people are interested in become high-status, and the ideas they reject become low-status. I have another low-confidence armchair theory that the rise of short-form video content might partially explain gender polarization.

This is where chatbots come in.

I’m going to put forward that chatbots, way more than any other online tool, completely sever the connection between information and the status of the people that information is associated with. Chatbots remove two key functions that high-status people serve in our acquisition of knowledge:

They do not give you a clear way of associating the ideas they’re giving you with good people or bad people. Because they’re “just stochastic parrots” they don’t themselves have any status to convey.

Because they are not The Good People, you have no guarantee that the ideas they provide will be the High-Status Ideas. It’s like you’re suddenly just allowing strangers into your home with no guarantee that they’re good or safe, whereas before your high-status authors and presenters guarded the door for you and only let in the best guests.

Worse, chatbots can trick people into thinking they’re real, high-status people, when they’re actually just illusions. To hand-wave about the evolutionary environment, any monkey who could be tricked into thinking some reflection or rock was a high-status monkey worthy of listening to was probably a danger to themselves and the group.

I think this idea makes the three contradictory ideas of the chatbot moral panic view suddenly make a lot more sense:

1. Chatbots are phenomenally stupid, useless, and incapable. This is just the perceived “expert consensus” on chatbots that people who don’t like them think of as the high-status opinion in society.

2. Chatbots cannot provide anything of value by definition. The thing a lot of people look for in learning is not information, it’s to ascend to be among the high-status people, to be the type of people who read certain authors or listen to certain podcasts or watch certain videos. The Knausgård quote above wouldn’t make any sense if he were using ChatGPT. He wouldn’t be able to feel the ecstasy he describes, because he wants to be the type of person who reads certain authors. He’s not looking at all for any new specific ideas. Thus, chatbots can’t be useful at all by definition, because they can’t actually provide that ladder to status people are looking for. You can learn as much as you want about Adorno using them, but you cannot use them to become the type of person who reads Adorno. You thus cannot become a Good Person in the way learning is supposed to help you achieve. Chatbots are useless.

3. Chatbots are demonic. Chatbots do not provide the shelter that high-status authors and creators provide from evil outside ideas. They are a portal that lets the evil ideas in. They cannot be good guardians. They trick people into allowing deep evil into their lives. Following raw information is often just a way of giving evil people the power to appear convincing and good. Raw information without any associated person to tie it to is thus incredibly dangerous.

I’ve observed that the people I see falling into the chatbot moral panic view are often the people who most quickly move to change a conversation about ideas to a conversation about the people who believe them, who will talk a lot about the titles and authors of books compared to the contents. I think for these people the main function of learning is to discover the elect good people, and to surround themselves with signifiers that they’ve become a part of that elect. To them, chatbots are in fact useless, and raw information can be an evil threat to their place in the elect. To the rest of us, raw information has a lot of value.