I regularly scroll by very popular posts where people confidently claim that AI as it exists cannot possibly “create new knowledge.” They usually bring up the fact that AI has been trained to imitate language and predict the next word based on imitation rather than actual thought, so it’s only regurgitating what already exists.

This talking point is crazy. It’s so obviously wrong that it’s disorienting to me whenever I see grown adults saying it. It’s one of those pieces of congealed common wisdom about AI that collapse the moment you poke at them.

Knowledge is justified true belief.[1] Can AI give me a justified true belief that no one else has had before? Yes, obviously. Google searches can also generate new justified true beliefs. It’s not actually difficult at all to generate new knowledge. It doesn’t require magic.

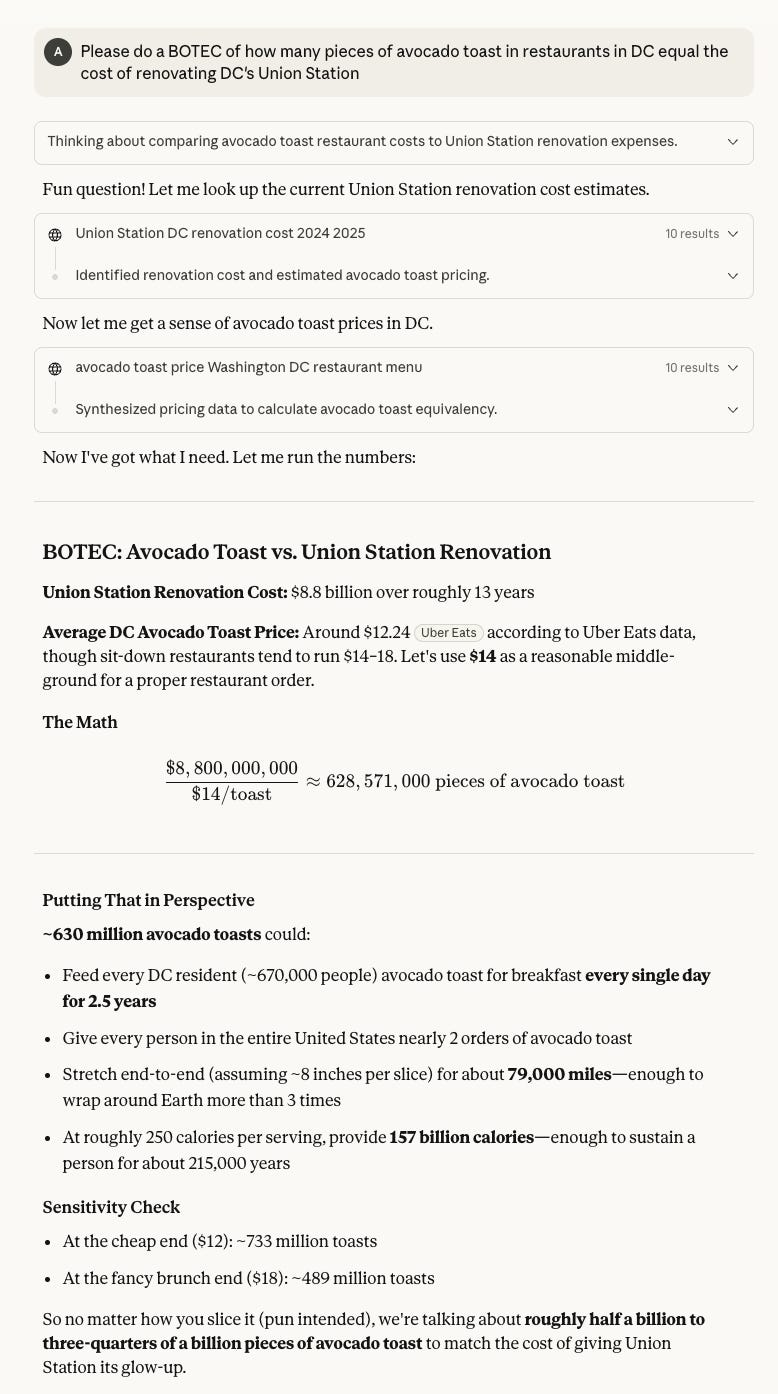

Here’s a very simple example. I asked Claude: “Please do a BOTEC of how many pieces of avocado toast in restaurants in DC equal the cost of renovating DC’s Union Station.”

This has given me a new justified true belief. It’s justified because Claude’s answer cites sources, and I can clearly see that there are good reasons to believe each of the premises that lead to the conclusion: the Union Station renovation costs roughly half a billion avocado toasts. It appears to be true. It’s entered my mind as a belief I hold. And it’s new: I don’t think anyone has held this specific justified true belief before. AI can create new knowledge. Why would anyone say otherwise?

This could all have been done with two Google searches: one for the cost of the Union Station renovation, and one for the cost of avocado toast. Doing a few Google searches can also generate new knowledge. It’s very, very, very easy to generate new knowledge! Why would AI models as they exist not also be able to do this?
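
The arithmetic behind a BOTEC like this is a single division. Here is a minimal Python sketch of it. The function name and both input figures are placeholder assumptions chosen for illustration, not the numbers from Claude’s answer, so swap in whatever your own two searches return.

```python
def toasts_per_project(project_cost_usd: float, toast_price_usd: float) -> float:
    """Return how many toasts add up to the cost of the project."""
    if toast_price_usd <= 0:
        raise ValueError("toast price must be positive")
    return project_cost_usd / toast_price_usd


# Placeholder inputs (assumptions for illustration, NOT sourced figures).
RENOVATION_COST_USD = 9e9        # hypothetical renovation cost
AVOCADO_TOAST_PRICE_USD = 18.0   # hypothetical restaurant price per toast

toasts = toasts_per_project(RENOVATION_COST_USD, AVOCADO_TOAST_PRICE_USD)
print(f"{toasts:,.0f} avocado toasts")
```

The point is less the code than how little it takes: two looked-up premises and one division produce a claim that nobody had to hold beforehand.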

I think that when people say this, they don’t actually mean new knowledge; they mean new concepts. AI has been trained on basically the full corpus of human text, rewarded for adhering to our conceptual universe, and punished for straying from it. It’s hard for me to imagine deep learning models as they exist independently inventing the concept of a negative number if their training data had never included any implication that negative numbers exist.

So I probably agree with LLM skeptics that there’s a good chance LLMs don’t scale to AGI, because the way they’re trained might mean that they’re fundamentally unable to generate radically new concepts for thinking about the world, which seems like a prerequisite for full human intelligence. They can merely approximate almost all currently existing human concepts that can be expressed in text, and combine wildly disparate information using those concepts to help us generate huge amounts of new knowledge. Still seems useful to me.

[1] The definition of knowledge is philosophically controversial, but most rival definitions lend more credence to my view.