As summarized in Part 1, folk Cartesianism consists of three strong intuitions:

There is a unified self that floats above mental processes and observes them

We know our own internal experience with complete certainty

The basis of all knowledge lies in our first-person subjective experience

In this post I’ll give a lot of arguments and intuition pumps for why each of these is probably incorrect, or at least isn’t “obvious.” This post is very long. My goal is that at the end you have a grasp of many of the most important questions in philosophy of mind, why so many philosophers reject the popular everyday understanding of mind, and that you hopefully come away with a bag of new mental tools for thinking about why machines might be able to replicate what happens in human minds. I’m writing this partly to be the guide I wish I had when I was first learning about this.

“1. There is a unified self that floats above mental processes and observes them”

How can a Cartesian ego fit within our scientific theories?

Cartesian egos are strange. They seem to behave like magical floating eyeballs, peering into physical reality from outside. No other physical object does this. No matter how many grains of sand I pile up, there will never be “something it is like to be the sand.” The sand does not have an internal movie theater playing experiences for it to observe. Being sand feels identical to being dead. We would expect all physical objects to behave similarly, because all physical objects are ultimately just elementary particles that don’t have experiences, similar to the grains of sand. No physical object ever becomes an observer in an inner movie theater. So how do Cartesian egos fit within our understanding of science?

There are only two options: Cartesian egos are fundamentally physical, or they’re not. Both options have some weird implications that make them look implausible.

Cartesian egos are not fundamentally physical

If Cartesian egos are not fundamentally physical, they threaten to clash with physicalism, which says that the only things that have causal power over physical objects are other physical objects. If they have any kind of causal power, this breaks physicalism. We have two options:

Cartesian egos do not have causal power

Maybe Cartesian egos are silent observers, taking everything in but never actually having any causal effect on the world. They silently watch the movie of your life play out.

This has some very weird implications, to the point that it looks like it can’t be true.

First, if a Cartesian ego doesn’t have causal power, it cannot have any influence over the information in your brain that causes you to physically speak or write about the ego itself. Your brain could never acquire any information that it exists. Describing Cartesian egos should feel like describing “that feeling of gloofleglorf around your left ear at 3:52 PM every day. We all know that one.” It would be something our brains have never received any information about at all.

But many Cartesians clearly don’t think this is how Cartesian egos work. Descartes himself talked as if nothing in the world could be more obvious than that he had an observing ego. “I think, therefore I am” is supposed to be the absolute obvious bedrock of all other reasoning. This implies that the Cartesian ego is giving the brain some kind of information that it exists, so it must be having some causal effect on the world. If it exists, it’s what caused Descartes to write that!

Another weird implication: if your Cartesian ego suddenly popped out of existence, and the “you” experiencing things no longer existed, literally nothing about your behavior would change, because the ego wasn’t affecting anything about your physical body. You would go on behaving exactly as you do, talking about your experiences and feelings as if they were really happening, but no one would be watching the internal theater. If you believe that you have an internal theater of experience, and that you are reacting to the experiences that happen in this theater, then you cannot accept the view that Cartesian egos both exist and do not have any causal power.

Cartesian egos have causal power

If Cartesian egos are both nonphysical and have causal power, they violate physicalism. This is possible. Maybe physicalism is wrong and nonphysical objects have effects on the world. But it’s very suspicious. Physics is this grand unified theory of the world that seems to predict everything from what happens inside particle colliders to distant stars to our ordinary lives, and does so with wildly consistent success. Physics is our best attempt at a complete account of every entity in the universe that has causal power, and it’s shockingly effective at making accurate predictions, to the point that most physicists perform their investigations under the assumption that physics is causally closed, that nothing outside of physics can affect anything in the universe. Yet the only place where the theory is supposedly violated is in the brains of the species making this claim. This species has evolved with strong motivations for others to treat it as special and different from the rest of the world. From the outside, it looks suspicious when the one place where magic is claimed to happen is located in a species that has strong reasons to want other members of its own species to believe that it’s special.

It seems like there should be some way of observing laws of physics being violated in human brains if this is the case. If the Cartesian ego is some nonphysical entity sending information to and receiving information from the brain, there must be some creative way to measure where this information suddenly appears in the brain out of nowhere. I’m enough of a physicalist that I wouldn’t expect us to ever make this observation, even if we had nanobots observing every neuron in a human brain.

Some people talk about quantum mechanics leaving an opening in the universe for non-physical objects to affect the brain, but this seems wrong for two reasons:

Most of the important signals that happen in the brain are on a scale that’s too large for the uncertainty of quantum physics to have a significant effect.

For quantum physics to work, the probability distribution of what happens needs to follow mathematical laws. This doesn’t leave any room for causality to “flow” through the randomness. Causality means that if A happens, B follows. But quantum mechanics says that B cannot be more likely than the pre-determined spread of probabilities dictates. So quantum mechanics is actually a completely sealed barrier to forces outside the universe. Nothing could get through its requirement for complete but mathematically structured randomness to influence the physical world.

Cartesian egos are fundamentally physical

If Cartesian egos are actually just very complicated physical processes, they don’t behave like any other physical processes. They’re the only physical processes that are “awake” and can have subjective experience at all. Very weird, but possible! Importantly though, if they are fundamentally physical, they could also exist in machines, so AI might independently develop them.

But Cartesian egos also seem to violate most core properties that every other physical object has:

Spatiotemporal location: They don’t seem to have a clear location in the world. Maybe right behind your eyes? Definitely not something that can be pointed at and identified.

Causal structure and lawfulness: They don’t seem to follow any kind of regular laws. They’re not discoverable through observation and experiment. They’re not predictable. They can’t be expressed mathematically.

Detectability/measurability: They can’t be measured, either directly or through their causal effects. They don’t seem to leave empirical traces, except for humans after the 1600s saying they had them.

Public accessibility: No other person has any access to my Cartesian ego at all.

All 4 common properties of physical objects are violated by Cartesian egos. Maybe they’re the only objects in the universe that violate all of them, whereas every other object adheres to all of them. Seems unlikely. I would expect any and all entities we discover to eventually fit into the universal wavefunction, the unifying mathematical description of everything that exists. Cartesian egos can’t be written as a part of this function.

Cartesian egos are folk theories and do not actually exist

All the above examples make Cartesian egos seem super strange to me, to the point that it doesn’t seem obvious that they exist. It seems like what’s actually happening is that human minds are inventing folk theories of how they work.

Importantly, there are ways that large information processing systems could develop subsystems that work kind of like Cartesian egos. Global workspace theory posits that there are parts of the human brain that send information to a lot of subsystems at once, as if their contents are on a stage and the subsystems are observers in the crowd, who each run with the big central information to make inferences. This seems like a possible physical correlate for what we mean when we think about the Cartesian ego, but it’s notably just another physical function in the brain.

Shouldn’t this all be obvious?

Stepping back from this debate, Cartesians talk as if the fact that we are each a self watching our experience like a movie is so obvious that it’s unquestionable, but they often wildly disagree on the nature of this self. Does the self have causal power, or does it just silently watch? Does thought happen “within” the self, or does the self passively observe thoughts as well as experiences? If no one quality of the Cartesian self is obvious, maybe its existence isn’t so obvious either.

Conclusion

So it seems like actual Cartesian egos probably don’t exist. The mind is not a movie playing for an inner observer, watching it like a floating eye peering into physical reality from somewhere else. The Cartesian theater of the mind isn’t actually happening, at least not in some special nonphysical way. Any description of the difference between humans and AI that implicitly relies on a nonphysical Cartesian ego humans have that AI does not is mistaken.

What’s left when a Cartesian ego falls away? Different mental functions taking in information, moving it around the brain, giving and storing outputs, and using those outputs in other functions. If global workspace theory is true, there might be some part of the brain that broadcasts out information to many subsystems at once, approximating the role a Cartesian ego plays. But there is no nonphysical angel-like eyeball looking over the whole subjective field of the mind like an observer in a movie theater. The mental function global workspace theory describes could be replicated by a machine.

“2. We know our own internal experience with complete certainty”

The goal of this section is to argue against the Cartesian idea that we have special access to what’s happening in our own minds, our own first-person subjective experiences, in a way other systems like computers cannot have, because they don’t have these experiences.

There are two related claims I’ll argue against here:

We have 100% certain access to the contents of our own experience. I can be mistaken about whether something white and puffy in a field is an actual sheep or a fake sheep, but I cannot be mistaken that in that moment my subjective, first-person experience includes white puffiness framed by a green field.

We can introspect and clearly observe what’s happening in our own minds. AI systems can never introspect in the way people can because they’re machines. They just take an input, run it through computations, and produce an output. Humans can turn their inner eye back on this process itself. We don’t know how to make machines do this and we might never know.

In both cases, I’ll be arguing for the alternative view that both experience and introspection are useful but fallible natural processes that take in potentially incorrect information, run it through mental functions and computations, and return outputs the mind stores and uses in additional functions. I am not arguing that we don’t have experiences or introspection, only that these do not behave like mystical, 100% clear and reliable sources of information that could never be replicated by machines, and that our experience is not like watching a movie in an internal theater. At the end, you should feel like the idea of a movie theater is a bad analogy for your internal experience, because there’s a lot more ambiguity and uncertainty in the experiences you have than what’s displayed on a screen. This ambiguity leaves us unable to be too certain about the nature of our moment to moment experience.

The slipperiness of our experience

A friend recently asked me a funny question: “What do you think the shape of your visual field is?”

My visual field has been my main experience since birth. There should be basically nothing more obvious to me than what it’s shaped like. Every moment, I’m being flooded with visual experience. What is the shape of this flood? If the Cartesian theater is real, what is the shape of the movie screen? Which of these three screens have you been looking at all your life?

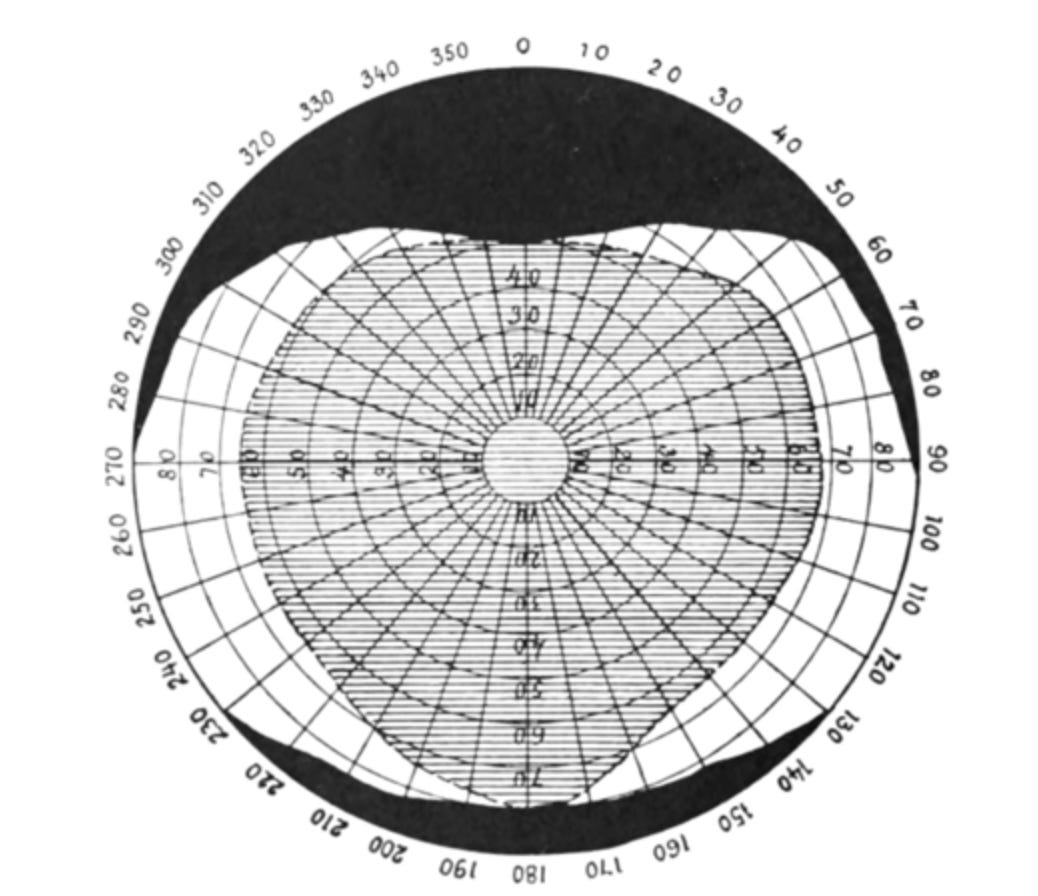

Turns out, this is the shape:

The shaded central area is seen by both eyes, the white area is seen by only one eye. Numbers represent degrees from straight ahead.

Does this shape look familiar to you? Given that you’ve been “looking at it” for your entire life, would you have been able to pick it out of a lineup of possible shapes for the visual field? Is this the shape of the screen in your internal movie theater?

This brings up one of the big central questions in philosophy of mind: qualia. From the Stanford Encyclopedia of Philosophy:

Feelings and experiences vary widely. For example, I run my fingers over sandpaper, smell a skunk, feel a sharp pain in my finger, seem to see bright purple, become extremely angry. In each of these cases, I am the subject of a mental state with a very distinctive subjective character. There is something it is like for me to undergo each state, some phenomenology that it has. Philosophers often use the term ‘qualia’ (singular ‘quale’) to refer to the introspectively accessible, phenomenal aspects of our mental lives. In this broad sense of the term, it is difficult to deny that there are qualia. Disagreement typically centers on which mental states have qualia, whether qualia are intrinsic qualities of their bearers, and how qualia relate to the physical world both inside and outside the head. The status of qualia is hotly debated in philosophy largely because it is central to a proper understanding of the nature of consciousness. Qualia are at the very heart of the mind-body problem.

If a computer could scan all the informational content in your brain, every last belief and memory and observation about the world, and get it all down in written words and stored information, people who believe in qualia would say that the computer would still not know what it was like to be you. Qualia are the contents of your first-person experience that cannot be summarized in pure information.

If the Cartesian ego is the person sitting watching the movie in our heads, qualia are the movie.

Unfortunately for me, qualia both:

Don’t make sense under physicalism (they violate all 4 properties of physical objects).

Are very very very hard for me to deny, in a way Cartesian egos are not.

I mean, look at this.

It’s hard to understand how any third-person purely informational account of the world could ever get across the first-person experience of seeing red. Simply reporting “A wavelength of 700 nanometers hit Andy’s eyes and activated memories and emotions associated with previous times this has happened” just doesn’t get across the first-person redness of the red. It’s like how all attempts to describe color to a lifelong blind person just obviously hinge on other experiences the blind person has also not had. We can’t get across the qualia using words.

I’m agnostic on whether qualia exist, or how they relate to the physical world. But even if qualia do exist, I’m pretty sure they’re very different than what our folk story says about them.

One of my favorite papers in philosophy, Quining Qualia, attempts to use lots of thought experiments to convince the reader that qualia don’t actually exist. The author (Dan Dennett) specifically defines qualia as having four key properties. They are:

Intrinsic: They’re basic and fundamental to the world and can’t be picked apart further. They’re also not “relational” in that they don’t depend on their relationships to other things. The quality of being “to the left of a bookshelf” is relational. It’s not an intrinsic property, because it could be changed by moving the object. But “the redness of the red” does seem intrinsic. No matter what colors we put adjacent to the red, or other things we compare it to, the redness of the red in my first-person experience will still be unaffected.

Ineffable: Not describable using words. You know what the redness of red is like, but you can’t actually get across to someone who’s never seen it before what that experience is like using words alone.

Private: Our experience of our own qualia is fundamentally private to us. We can’t even know if other people experience the same qualia. “How do I know the red you see and the red I see are the same?” is a question about qualia. There is no way one person can ever get direct access to another person’s qualia. Qualia are like each of us is stuck forever in our own private movie theater, never able to access what other people can see on their screens.

Directly or immediately apprehensible in consciousness: I can be mistaken about the source of red light, but I cannot be mistaken about the fact that I’m experiencing the redness of the red. I can’t directly see or hear or experience any objects actually, I can only experience the qualia they create in my mind, but that I have direct access to.

Dennett’s claim in the paper is that qualia do not actually exist, because our internal experiences actually have none of these four qualities. The reason I’m summarizing this paper specifically is that most of his points overlap with my main point about folk Cartesianism: our assessment of our own subjective, first-person experience is actually unreliable and often wrong. We don’t have 100% certain access to it. It provides useful information, but it should be taken as one data point among many. It cannot serve as some kind of magical firm foundation to build all our other beliefs off of. The information processed in our brains mostly isn’t actually rooted at all in the fact that we have qualia. If this is the case, systems without subjective first person experience can also have justified true beliefs.

So what are the issues for qualia? Dennett provides thought experiments for each property people claim they have. I’ll be half-summarizing them, and sometimes running with my own twists on them. I do strongly recommend you read the full paper.

1. Intrinsic

People “develop a taste for” coffee and beer. Most people I know who drink either remember when they took their first sip and didn’t like it, but now they love it. Did the actual taste, the direct sensation they experience in the moment of drinking beer or coffee, change over time? Or did the sensation stay the same, and only their reaction to it changed?

It seems like this should be a pretty easy question if our mental experience is “right in front of us.” If we’re watching a movie, there’s a clear difference between a character appearing on the screen before and after we’ve changed our judgment of them, versus a totally new character appearing. We can tell which one is which. But it seems like we have a lot of trouble when we stare directly at what’s going on with the sensations we experience with beer and coffee, or anything that comes with some judgment of the experience. It seems like our reaction to the sensation is somehow also part of the sensation itself. Maybe over time we’ve developed an ability to notice parts of the sensation we didn’t before. But if this is the case, it means these experiences aren’t “intrinsic.” They aren’t single, indivisible things, because they can actually be broken up into smaller individual experiences. They may even be “relational” because core qualities of them seem to depend on our judgment of them. See here for more of a breakdown on the intrinsic vs relational distinction.

We don’t have any clear way of telling whether any of our sensory experiences are actually “intrinsic,” just a bare experience that can’t be broken up into parts, or actually an assemblage of many individual experiences at once. This again chips away at our image of ourselves as having direct, easy access to our subjective, first-person experience.

The taste of coffee to a novice drinker may be a single overwhelming experience. They can’t detect any subtleties or how the taste is composed of many individual hints and flavors. Instead, they just taste a disgusting, overwhelming bitterness. Over time this taste changes… but does it change? Does their reaction to it change? There’s no way to be sure, but I lean hard in the direction that their actual taste of the coffee changes, not just their reaction to it. Suppose you didn’t like coffee, and I zapped your brain with a machine that gives you my personal reaction to the taste. You take a second sip, and suddenly you like it. It is very difficult for me to imagine you saying “Oh! I like the coffee now! It’s so good! I want to drink it all the time” while also saying “But it tastes exactly the same as it did a moment ago. See, my reaction to the taste was just changed.” I’d imagine this person would instead be stunned, and say something like “Oh it tastes very different now, the bitterness isn’t overwhelming, there are a lot of pleasant notes and hints I couldn’t detect at all before.” It seems like our judgment of the qualia is somehow itself also part of the qualia. The boundary between ourselves and the qualia we experience blurs.

A plucked guitar string

The sound of a plucked guitar string can be indescribable to an untrained ear. They only hear a single, fundamental, basic experience, “the guitarness of the sound.” But to trained experts, a plucked string sounds like the combination of several different sounds a string can make happening at once. Are these people having different qualia, or are they reacting differently to the same qualia? Dennett himself puts this well:

Pluck the bass or low E string open, and listen carefully to the sound. Does it have describable parts or is it one and whole and ineffably guitarish? Many will opt for the latter way of talking. Now pluck the open string again and carefully bring a finger down lightly over the octave fret to create a high “harmonic”. Suddenly a new sound is heard: “purer” somehow and of course an octave higher. Some people insist that this is an entirely novel sound, while others will describe the experience by saying “the bottom fell out of the note”--leaving just the top. But then on a third open plucking one can hear, with surprising distinctness, the harmonic overtone that was isolated in the second plucking. The homogeneity and ineffability of the first experience is gone, replaced by a duality as “directly apprehensible” and clearly describable as that of any chord.

The difference in experience is striking, but the complexity apprehended on the third plucking was there all along (being responded to or discriminated). After all, it was by the complex pattern of overtones that you were able to recognize the sound as that of a guitar rather than a lute or harpsichord. In other words, although the subjective experience has changed dramatically, the pip hasn’t changed; you are still responding, as before, to a complex property so highly informative that it practically defies verbal description.

So what changes between people who can detect all the subparts of the sound of a single low guitar string, and people who can only hear the note as a single, intrinsic, undivided “guitarish” sound?

Does the guitar make different sounds based on how much you can detect? That seems unlikely. If one person can pick apart the sounds they hear much better, are they having additional sensations beyond the sound of the guitar itself, or is the guitar string just composed of these individual sensations like a checkerboard is composed of red and black squares, and the other person just can’t pick up on those internal differences? But “picking up on” the sound is itself what we’re trying to describe here: the direct, first-person experience of hearing. These experiences are specifically not supposed to be just the information we gather that we can write down; they’re supposed to be the first-person, in-the-moment undeniable experience. The fact that we can’t tell whether the two people experience the same or very different qualia is another hint that our sensory experiences are not simply displayed on a mental screen for us to watch like a movie.

Conclusion

We do not have any clear way of telling whether any of our qualia are actually “intrinsic” and just a bare experience that can’t be broken up into parts, or actually an assemblage of many individual experiences at once. When you first drank coffee, you had no way of telling that this taste you were experiencing was or was not an assemblage of many different other experiences at once. Just like the first-time coffee drinker, we have no way of knowing whether any one experience we have is actually intrinsic, or a combination of many different experiences at once. This again chips away at our image of ourselves as having direct, easy access to our subjective, first-person experience. People often talk as if qualia are these brute, intrinsic, foundational facts of our mental world, but it seems like we can barely tell if any one experience we have is actually composed of many others, or whether we ourselves are affecting the experience based on our judgment and reaction to it.

This all makes our experience seem very distinct from an inner movie theater. Unlike the movie theater:

It is not obvious when something is a single basic thing (like a solid color on the screen) or whether it’s composed of many other things we’ll easily notice when we get more experience with it.

Our judgment seems to directly affect our experience. We are not passively watching a screen of experiences and then judging and reacting to them after. The screen itself seems to radically change based on how we judge it.

2. Ineffable

Dennett argues that the ineffability of qualia is not due to qualia being some special, mystical property, but because they are “practically” ineffable. They are just highly specific, information-rich responses to the world that could in theory be described if we have enough experience with them. “Ineffability” is just the current horizon of our ability to analyze our own responses, not a fundamental property of the experience itself. This limit can be changed with training (like wine tasting or ear training).

The last example with coffee and the guitar string gets at this idea. Qualia might only be indescribable because we and other people are relatively untrained at detecting the communicable information they’re giving us. With training, people can learn to communicate a lot more relevant information about the sound of a guitar string or the taste of coffee. It might be that we’re mistaking our own current cognitive limitations for a fundamental property of the experience itself. Over time, experts get better and better at describing qualia.

On this point I mostly part ways with Dennett, since it does seem to me like some aspects of experience might permanently be walled off from public discussion. But this isn’t as relevant to the question of the Cartesian theater.

3. Private

Qualia are really really private

Qualia are fundamentally private. No one else can ever access what we experience.

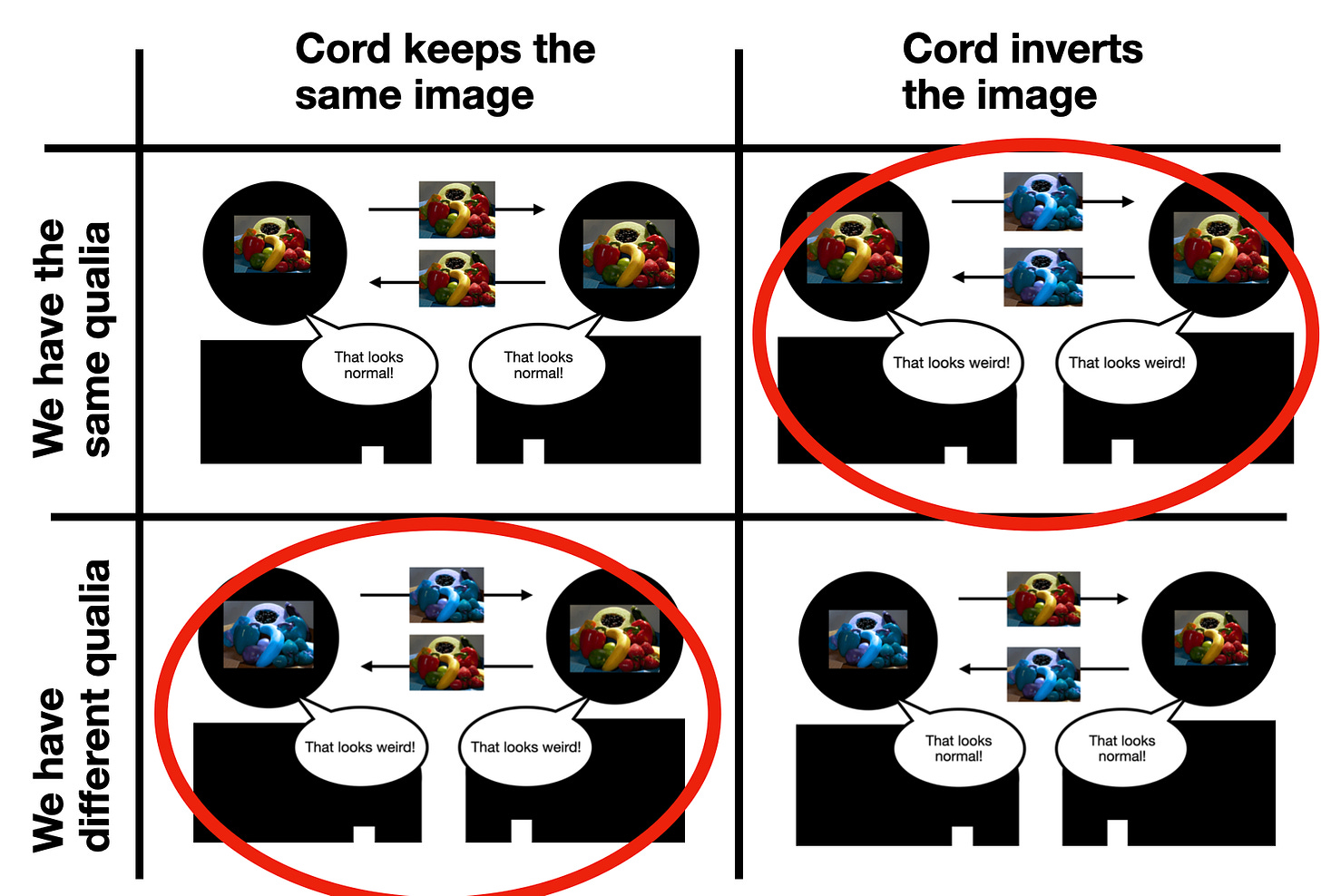

Suppose we invent a machine that connects our brains, feeding your visual input directly into mine.

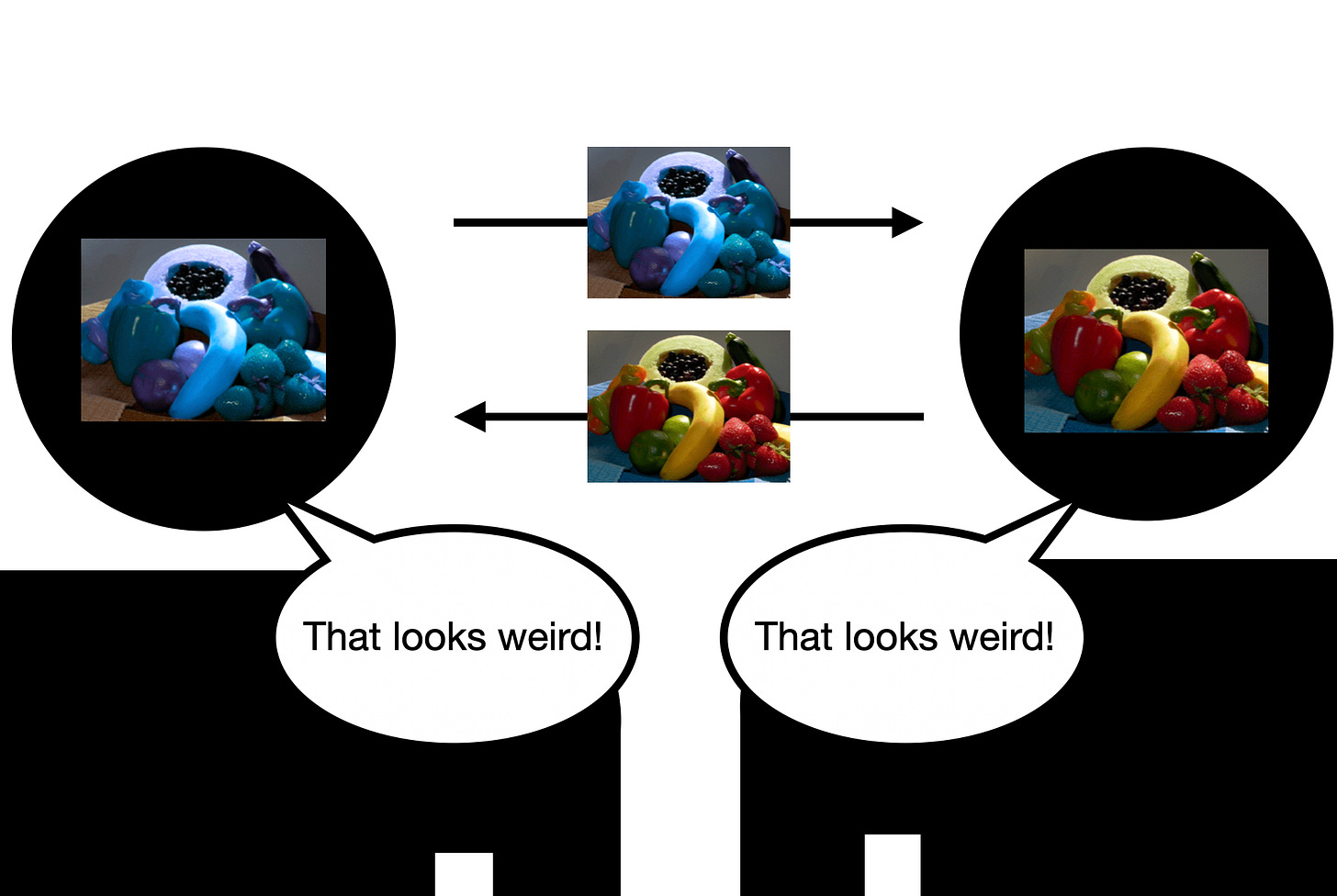

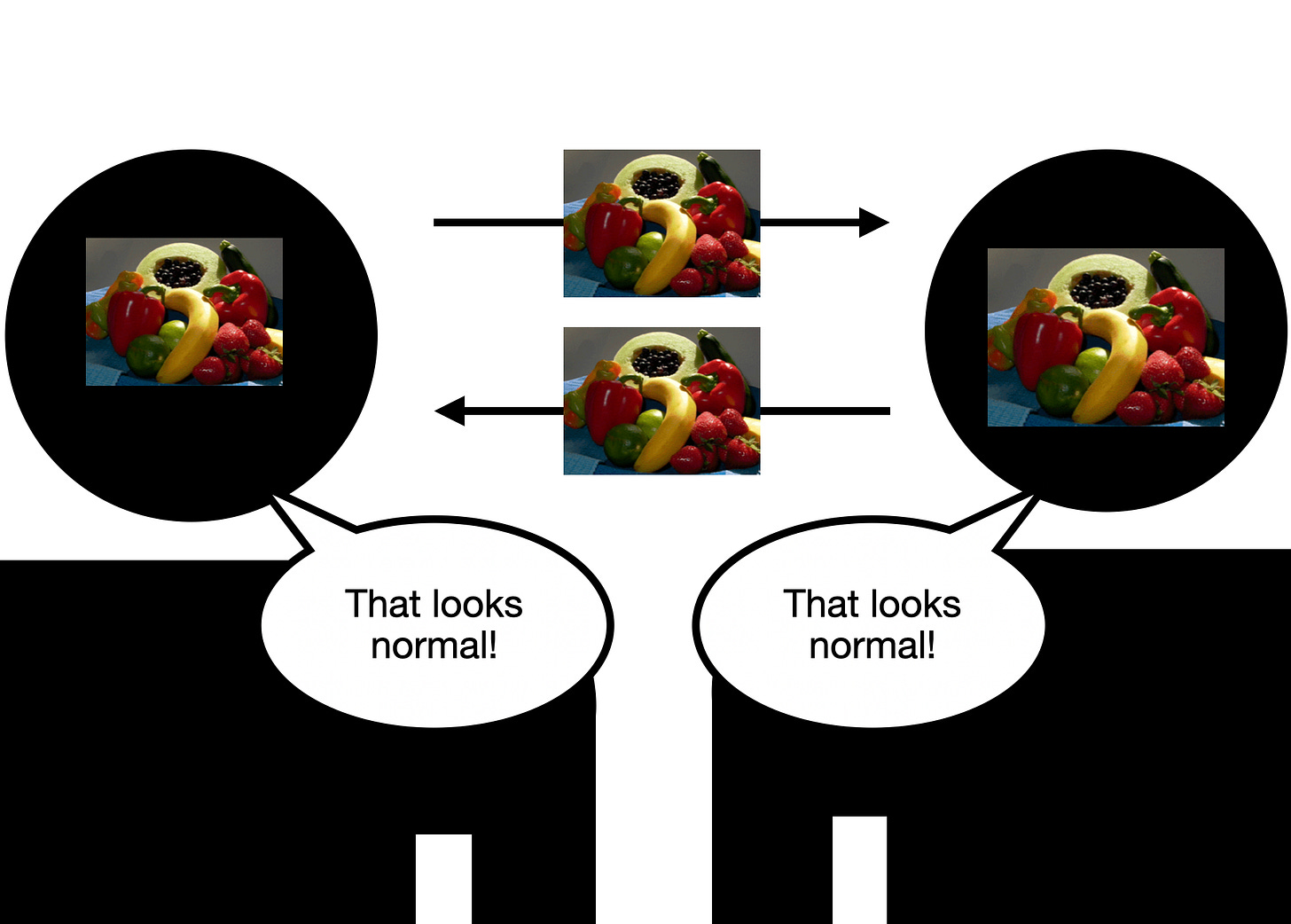

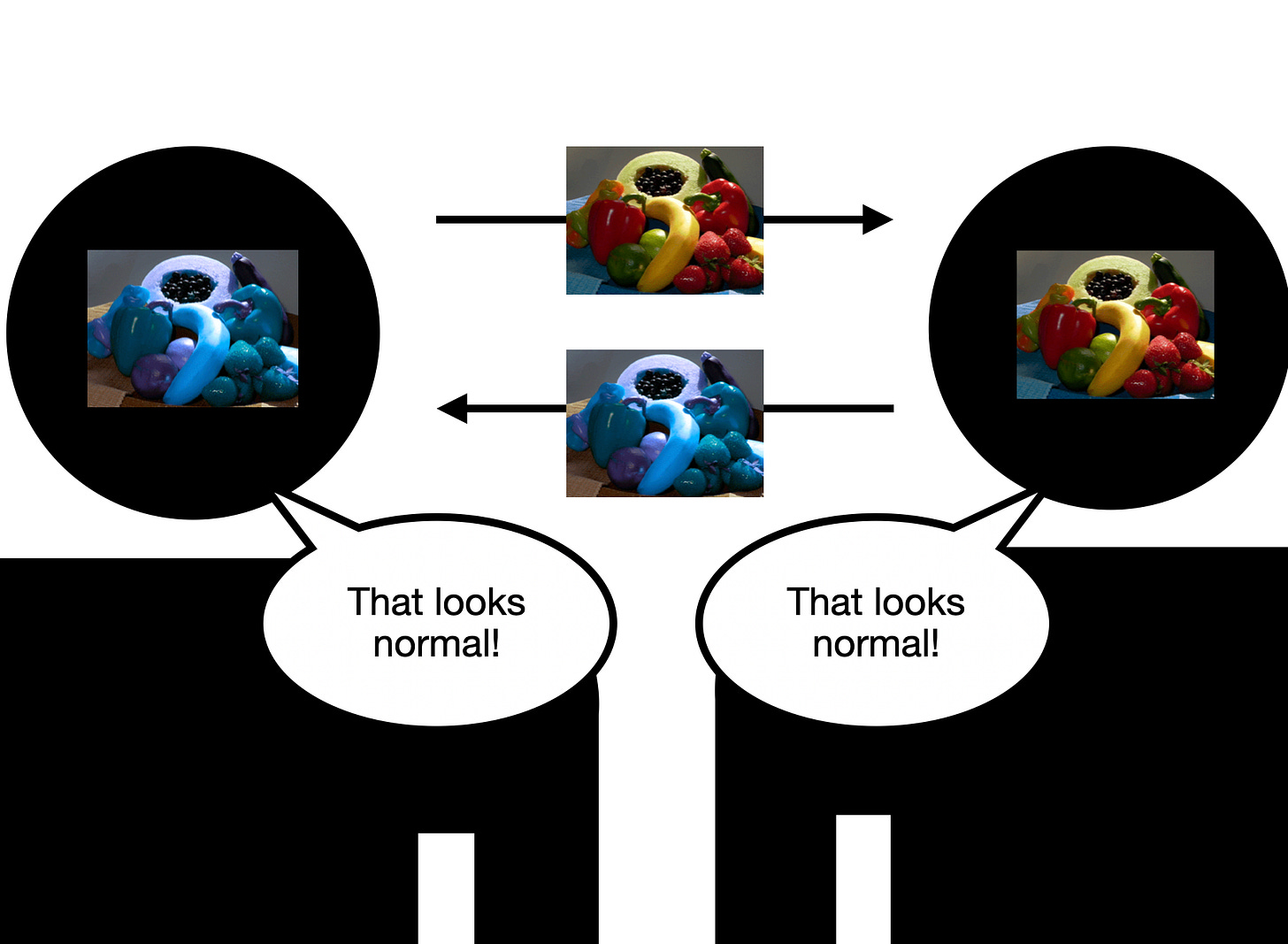

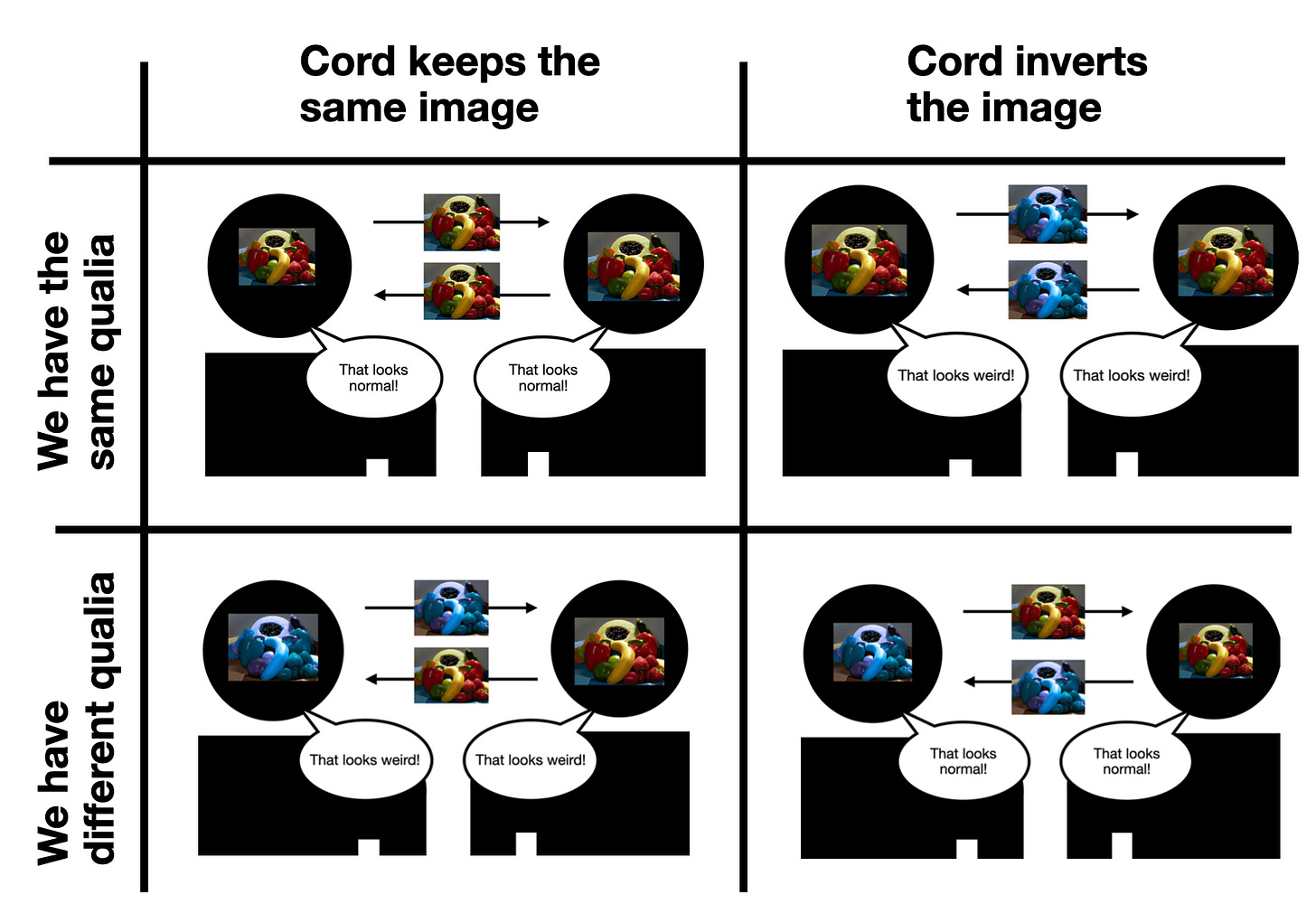

I report seeing everything you see. (This is the plot of the 1983 movie Brainstorm.) I step back and gasp “Wow! Your qualia are completely different from mine! Your red is my blue! Everything smells and tastes different. This is crazy! We’re living in two different worlds. Qualia can be so different without anyone noticing.” We agree this is crazy. Suddenly, you scratch your head and say “Wait a minute, maybe we messed up somewhere. What if we plugged the wire that connected us in upside down on one end? Let’s just check to see if that changes anything.” We flip the plug upside down at one end and try it again. This time, we both agree that the other person’s experience looks totally normal.

What happened here? There are two possibilities for us: either we have different qualia, or we have the same qualia. There are also two possibilities for the cord connecting us: either it inverted the images the first time, or the second time.

Can we tell which is true based on the information we have?

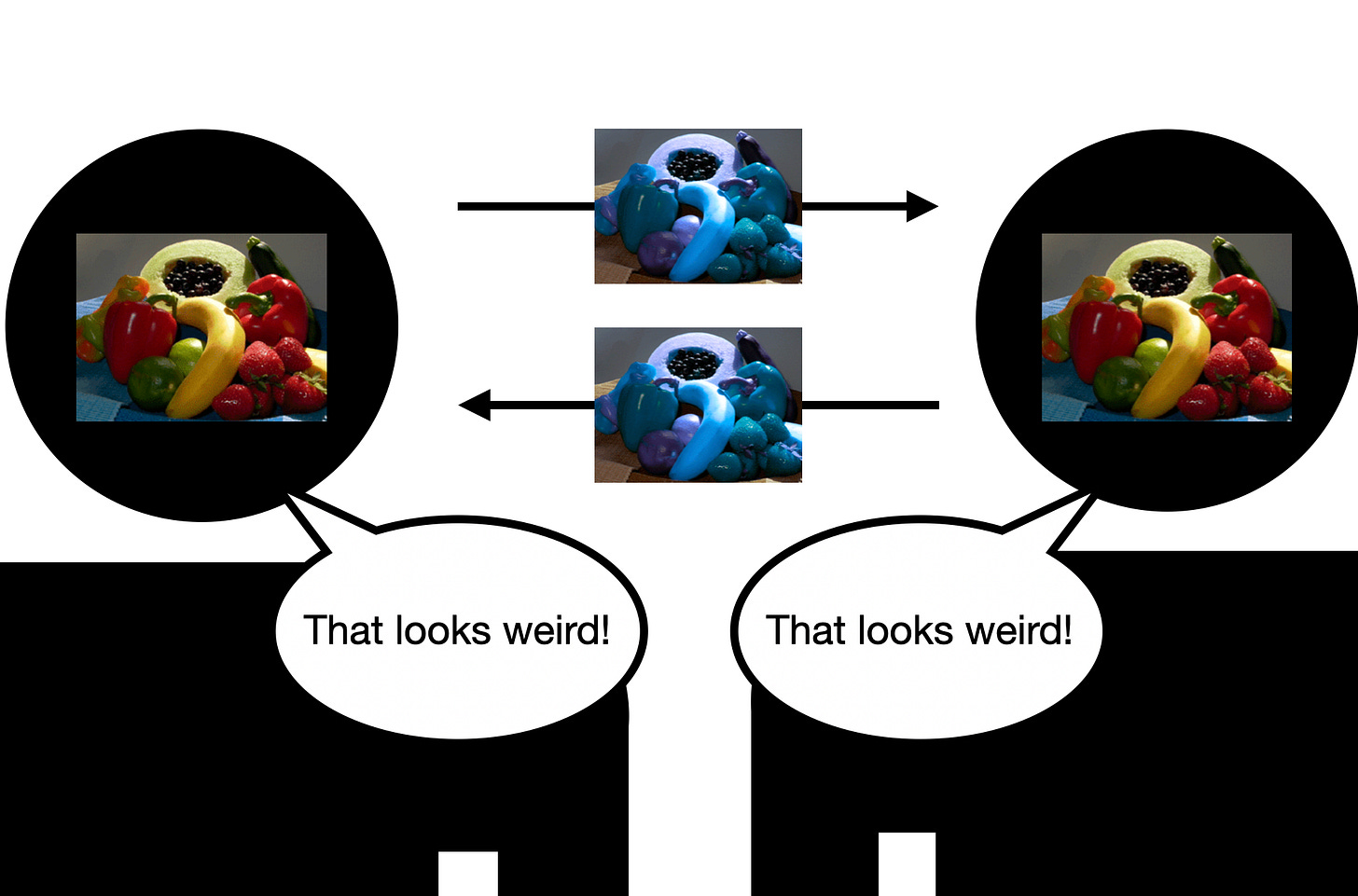

In a connection where the qualia look weird to us, there are two possibilities. One is that we both have the same qualia as each other, and it’s the connection that’s inverting it. This would cause us both to say the other person’s qualia looked weird.

Another possibility is that the connection is correct, and we do have inverted qualia. This would yield the same reaction:

The situation where we confirm that what we see is normal also has two possibilities. It could be that the connection is reporting what our qualia actually look like to the other person.

But it could also be the case that the connection is inverting our different qualia, making them look normal when they’re actually different.

Let’s organize these situations by whether we have the same or different qualia, and whether the cord inverts the image.

Now, you want to test to see if the cord is right-side-up or not. You plug two people in. As they’re watching the other person’s vision, they say “that looks weird!” This is all the information you have to go on. You can listen to them both say “Wow… your blue is my yellow. Your red is my purple” for as long as you want, but this doesn’t really add any new information, because either way you face the same problem. There are two possible situations in which the participants say the other’s qualia look weird: the cord is inverting their similar qualia, or the cord is faithfully transferring their different qualia.

Given the information you have, you have no way to tell whether it’s the cord that’s wrong, or if the two people have different qualia. Your evidence perfectly fits both cases. If you flip the cord, and the participants say “now it looks normal!” you have no way of deciding between these two cases.

Again, you don’t know if the cord is actually showing that the two people have the same qualia, or if it’s now upside down. You have no way of telling if the cord is right-side up or upside down. Even a machine like this can’t ever actually give you direct access to the other person’s qualia, because neither has any way to externally test if the machine is getting it right.
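The four situations can be enumerated mechanically. The sketch below is only an illustration of the structure of the evidence, under some loud assumptions: “same or different qualia” and “cord inverts or not” are each squashed into a single true/false value, and the names `report` and `evidence` are made up for this example. It is not meant as a model of qualia themselves.

```python
from itertools import product

def report(qualia_differ: bool, cord_inverts: bool) -> str:
    """What both participants say while plugged in (hypothetical toy model)."""
    # Things look inverted only when exactly one of the two hidden facts holds:
    # different qualia sent faithfully, or identical qualia sent through an
    # inverting cord. Two inversions cancel out.
    return "weird" if qualia_differ != cord_inverts else "normal"

def evidence(qualia_differ: bool, cord_inverts: bool) -> tuple:
    """Reports before and after flipping the plug at one end."""
    before = report(qualia_differ, cord_inverts)
    after = report(qualia_differ, not cord_inverts)  # flipping toggles the cord
    return (before, after)

# Group the four hidden situations by the evidence they produce.
worlds_by_evidence = {}
for qualia_differ, cord_inverts in product([False, True], repeat=2):
    key = evidence(qualia_differ, cord_inverts)
    worlds_by_evidence.setdefault(key, []).append(
        {"qualia_differ": qualia_differ, "cord_inverts": cord_inverts}
    )

for key, worlds in worlds_by_evidence.items():
    print(key, "is consistent with:", worlds)
```

Every sequence of reports ends up paired with one world where the qualia are the same and one where they differ, which is the point of the thought experiment: flipping the cord changes what people say, but never which hypothesis about their qualia the reports favor.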

So qualia remain fundamentally private. We have no final way of telling whether someone else’s qualia are the same as our own.

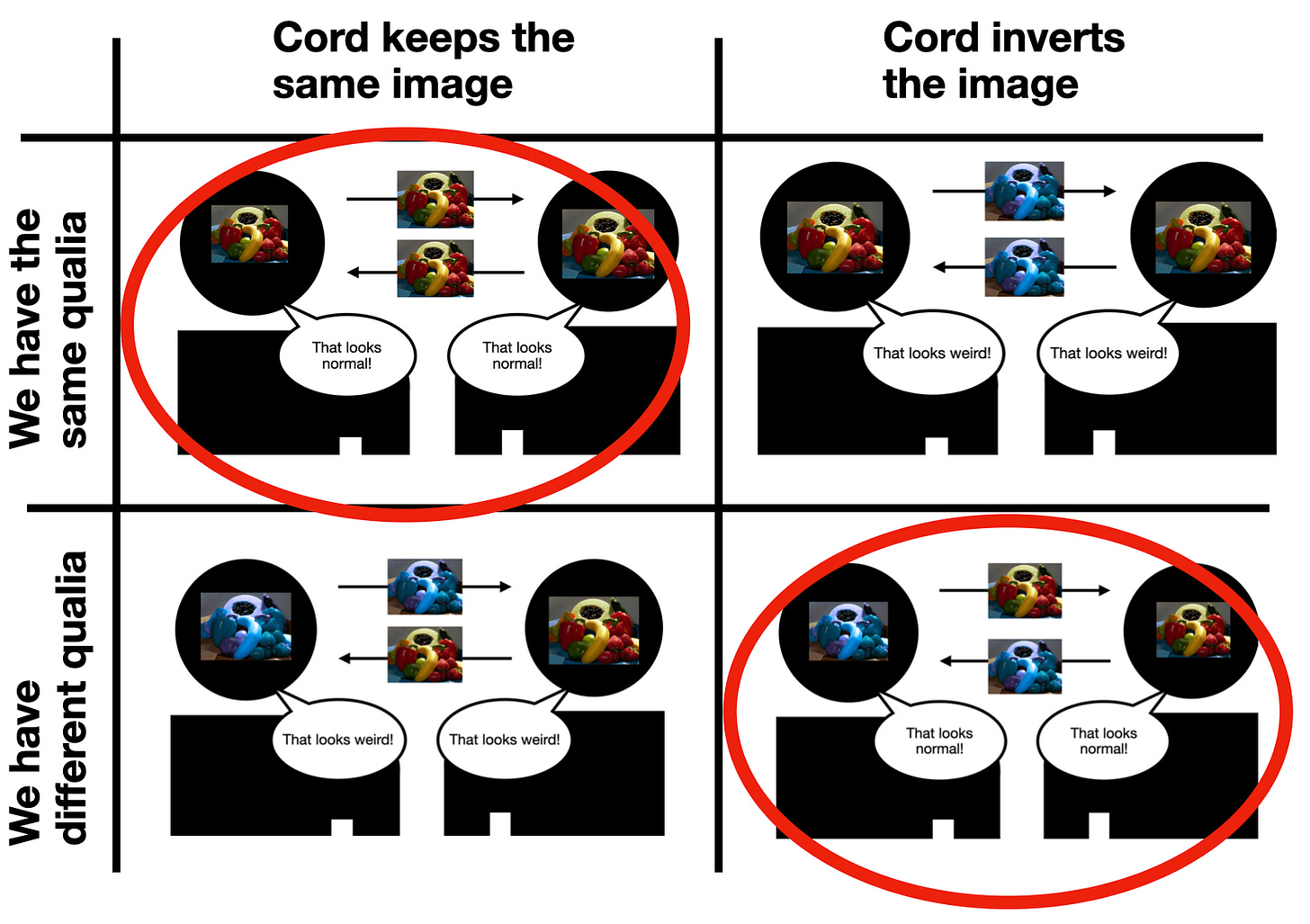

If qualia are this private, you do not have direct mental access to your own qualia

But if qualia are this completely and totally private, this also creates a problem for us. All our understanding of which qualia look “normal” comes from our memories and intuitions about what the qualia looked like in the past. And we’re actually in the same relationship to our past self as the two people were in the last thought experiment.

We can imagine swapping the machine in the last example with a different machine. This time, only you get in. When you step out, all the colors in the world look inverted to you.

You’re stunned. The world is overwhelmingly alien. You ask “How did you invert my qualia?” The machine operator chuckles. “Oh, your qualia are actually the same as always. The machine uses a simple trick: we just edited your memories so that the colors in them are swapped. You now remember yellow as being blue, and blue as yellow, for example.”

You’re very confused. “But the banana I see in front of me now is clearly blue!”

The operator says “Oh no, it looks exactly the same to you as it always did. It’s just that all your memories of yellow have been crossed with blue things. Like I bet that banana looks exactly like what you remember the sky looking like, right?”

You nod.

“Well if you go outside, you’ll see the sky’s never actually looked like that. It’s blue and has always been blue. We just swapped your memories, so now that color’s associated with what you think of as the color of bananas.”

You go outside, and the sky looks banana-yellow to you.

“No this is all clearly wrong. You did invert my qualia somehow.”

The operator scratches her head. “How would you tell the difference between an inversion of qualia and an inversion of your memories?”

“Well I just feel in my gut that this is wrong.”

“But that gut feeling is all your memories and built-up intuitions of the world.”

Hmm…

Later, a huge scientific discovery is made. Scientists have located qualia themselves, directly in the pineal gland of the brain. The operator uses this knowledge to build a second machine. This one really inverts your qualia and leaves your memories untouched. She invites you to try it out, but because the machines look identical, she realizes she has mixed up which one is which. Is there any way at all for you to tell which machine is which based on the outcome of going through it? When you emerge, will you have any way of knowing whether it was your qualia or your memories that were inverted?

In this example, memories are taking the place of the cord in the first example. When it comes to your qualia, your connection to your past self through memory is identical to your connection to the other person’s qualia through an unreliable cord. In both cases, you don’t actually have direct infallible access to the qualia.
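The two machines can be compared in a small toy model. This is only a sketch under loud assumptions: the labels `Q_yellow` and `Q_blue` are hypothetical stand-ins for two qualia, and everything you can say on stepping out is assumed to be a comparison between present experience and what memory delivers.

```python
# Hypothetical labels: "Q_yellow" and "Q_blue" stand in for two qualia.
ORIGINAL_QUALIA = {"banana": "Q_yellow", "sky": "Q_blue"}  # how things look now
ORIGINAL_MEMORY = {"banana": "Q_yellow", "sky": "Q_blue"}  # how you remember them looking

def swap(mapping: dict) -> dict:
    # Exchange the two values, leaving the keys in place.
    return {"banana": mapping["sky"], "sky": mapping["banana"]}

def reports(qualia: dict, memory: dict) -> dict:
    # Assumption: all available judgments compare a present experience
    # with a remembered one.
    return {
        "banana looks as remembered": qualia["banana"] == memory["banana"],
        "banana looks like the remembered sky": qualia["banana"] == memory["sky"],
        "sky looks like the remembered banana": qualia["sky"] == memory["banana"],
    }

# First machine: memories swapped, qualia untouched.
memory_machine = reports(ORIGINAL_QUALIA, swap(ORIGINAL_MEMORY))
# Second machine: qualia swapped, memories untouched.
qualia_machine = reports(swap(ORIGINAL_QUALIA), ORIGINAL_MEMORY)

print(memory_machine == qualia_machine)
```

Under these assumptions the two machines leave you making exactly the same reports, which is the sense in which memory plays the role of the cord.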

This is a big problem, because all human thought happens in time, and the present is an infinitely small slice of time. If qualia are so private that they can only be accessed in the infinitely short present, we humans cannot actually access them directly, because we live and think in time.

Whenever your mind receives any information at all about qualia, you are reacting to a past experience, because of the fractions of a second it takes the signal from the qualia to be mentally processed. But already at this point, you’re thinking about something in the past, and you’re dependent on memory. Qualia’s privateness means memory does not give you that special access you’re supposed to have. Memory, like the cord, is fallible. You can be wrong about what you just saw. “What’s in front of your eyes” still needs fractions of a second to process. To be clear, this doesn’t mean you’re necessarily wrong about the qualia you’re experiencing. It just means you can be wrong about them. This strikes at the heart of the Cartesian intuition that we have direct, certain access to our own subjective experience.

If the qualia in the last experiment are so fundamentally private that no one else can ever access them, you yourself also have no fundamental cognitive access to your own qualia. They are as inaccessible to you as they would be to another person using the brainstorm machine to try to understand what it’s like to be you. Your brain can provide you with valid reports of what you’re experiencing, just like the other person can provide you with verbal reports about what they’re experiencing, but in both cases you don’t have that direct, magic, private access qualia are supposed to come with. In fact, here they’re behaving suspiciously similarly to a world where they don’t exist, and where your brain is only receiving information about the world that can be reported publicly, either to your future self or to another person.

Again, this seems like really bad news for the inner theater model of the brain. Not only is there no observer, and not only is the screen itself unclear and obscured by our own judgments of it, but now the screen is in another room, and written reports are being sent in about what’s happening in the movie.

A relevant argument here is Derek Parfit’s long piece in Part 3 of Reasons and Persons on how the self does not persist over time, and your relationship to your past and future self is similar to your relationship to other people (summary here). This is an especially good but long read for people interested in more arguments against Cartesian egos. If qualia are so fundamentally private that no one else can access our qualia, but also we ourselves are different people moment to moment, the intersection of these views seems to imply that our own access to our past qualia (which is all qualia we can actually think about and respond to, because the present is an infinitely thin slice of time) is identical to our access to other people’s qualia: indirect and uncertain.

What to make of this? I think that this is a strong sign that qualia (whatever they are) are not fundamentally private, and might exist instead as some kind of (very complex) reportable information that can be communicated between people who can understand it. This makes much more sense to me than the idea that they are fundamentally private, because the implications of fundamental privacy make them behave completely differently than how I experience them. I want to define qualia as “the experiences that I have some direct access to.” It might be that those experiences are entirely just raw information in my brain assessing the situation. This information could in principle be communicated completely to another brain. I’d hope so, because I also think the relevant information can be communicated by memory across time to my present self. Again, this is a case where it seems like we have as much mental access to our own qualia as a computer would: an uncertain tether of memory or wires communicating information about what the world is like.

The more inaccessible you believe qualia are to machines, the more inaccessible you believe they are to your own mind as well.

4. Directly or immediately apprehensible in consciousness

If qualia are so fundamentally private, we’ve seen above that they by definition are not directly or immediately apprehensible in consciousness. We’ve also seen via the coffee and beer examples that people have a surprisingly hard time apprehending the qualia they are currently experiencing or have experienced in the past. We can’t even tell if the qualia we’re experiencing are basic and intrinsic, or composite and relational, as in the case of the guitar string. If qualia exist, we have a strange and complicated relationship with them. They are not directly apprehensible in consciousness in the way an unobscured movie screen is.

Conclusion

I think there are some clever arguments that even with these objections, qualia may still exist. But we’re way, way less sure of our own subjective experience than folk theories imply, and the nature of qualia seems more complex than simple, accessible, nonphysical pure experience.

So it seems like we don’t actually know our own internal experiences with certainty. Here I don’t mean that we might be mistaken about what objects and events we’re seeing, I mean that we can be mistaken about the nature of our current first-person subjective experience. Not only is there no one sitting in the Cartesian theater, and not only is the screen itself obscured, but there doesn’t seem to be a screen at all. This magical place, the center of the mind and thought, that so many people seem to take as so obviously given it’s crazy to doubt it, does not seem to actually exist.

If this is the case, first-person subjective experience becomes one of many possible sources of information and evidence. It’s useful, but it’s reduced to the same level as other ways we acquire information, like written descriptions, verbal accounts, and knowledge that other people we trust believe things (all of which computers can access). Most importantly, humans do not seem to have some magical, central location where experience happens that machines by definition will always lack.

The impossibility of folk introspection

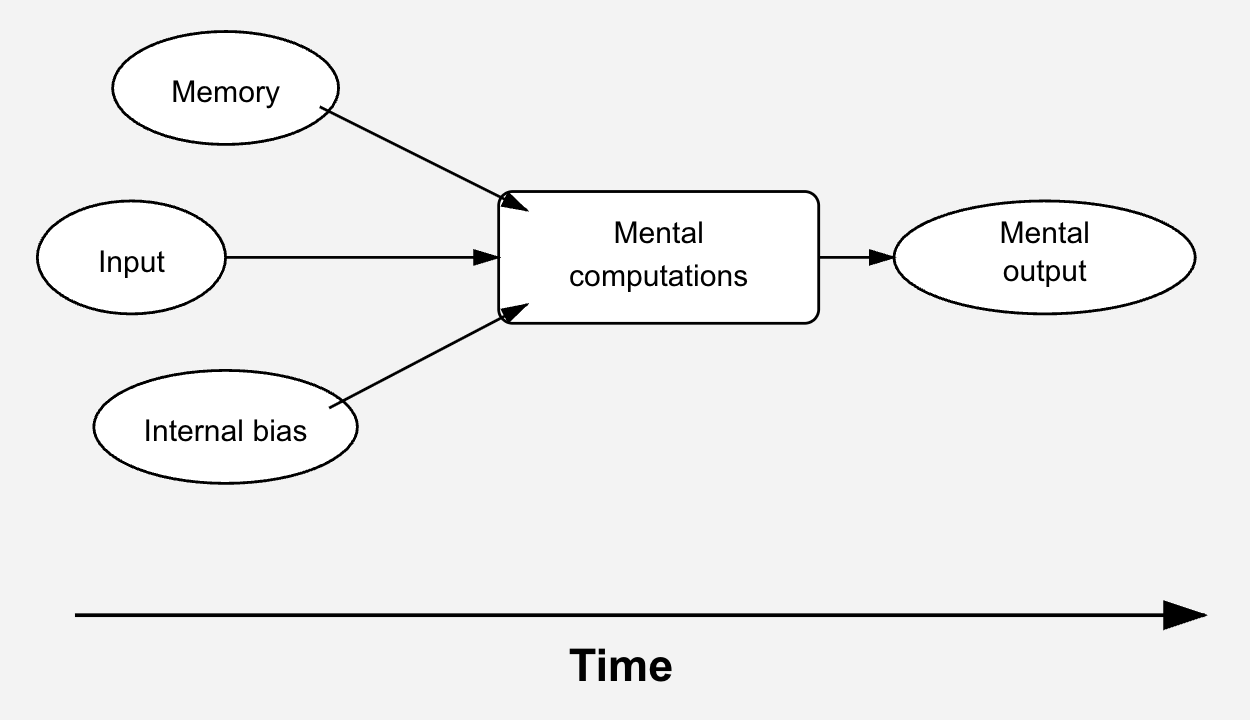

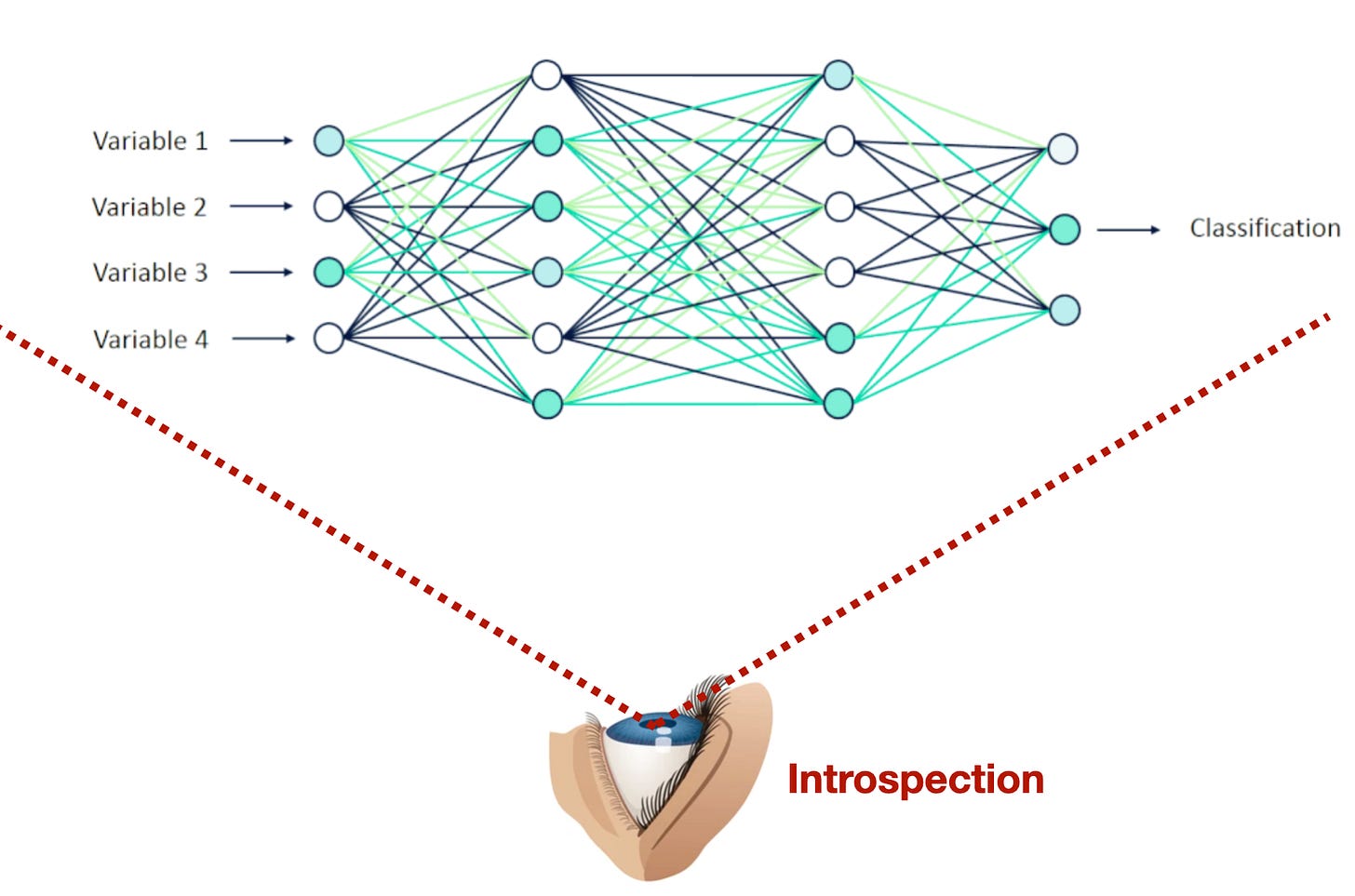

A very common critique of large language models is that they can’t “introspect” in the way humans can. They just take in input, run it forward through the net, from its input variables to its final classification, and don’t have any way of circling back on anything that’s happened. They can’t go backwards. Therefore, unlike humans, they do not have introspection. They’re pattern recognition rather than true thought. Here, the information in the neural network passes from left to right through the weights and nodes. The only way this model could have true introspection is if it were somehow able to step outside of this process and observe what had already happened:

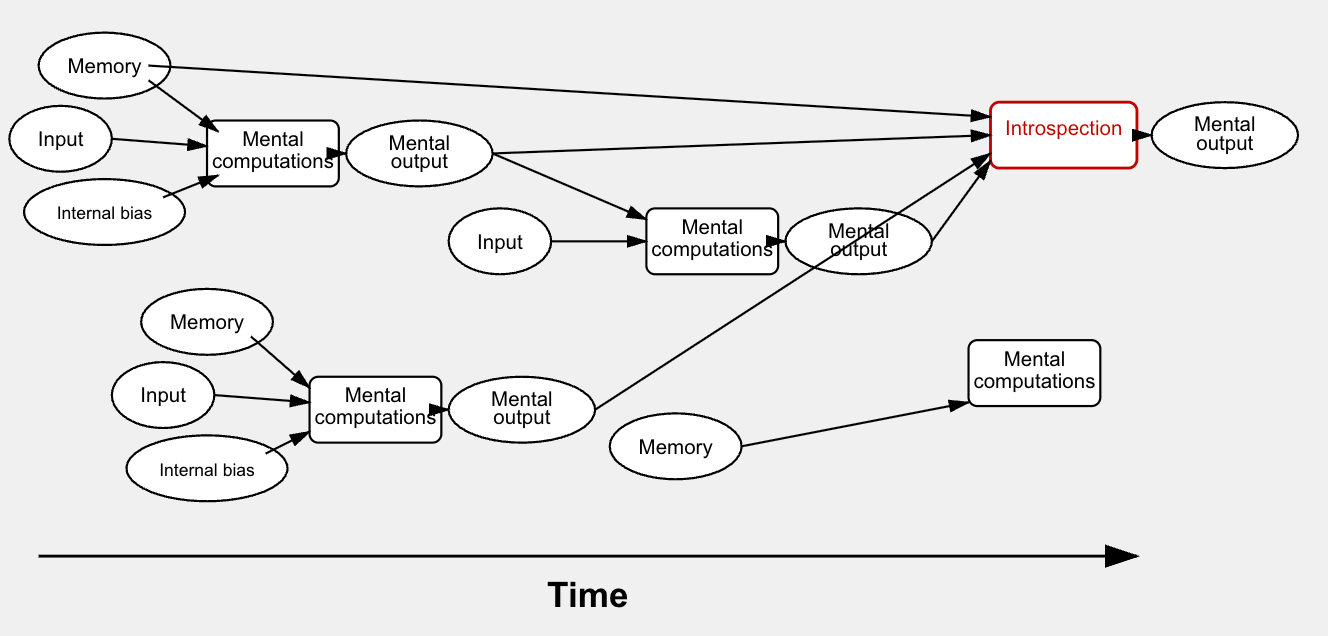

This critique always seemed implicitly Cartesian to me. Human brains are also only more or less complex information-processing units. They are composed of inputs, mental functions that perform computations, and outputs. Those outputs are themselves used later as new inputs.

Crucially, all information processing in the human brain can only happen in one direction in time: forward, because every mental subprocess in the brain is physical. When our minds take in input, they might combine that input with memories or inbuilt mental biases, run these through mental computations, and produce an output.

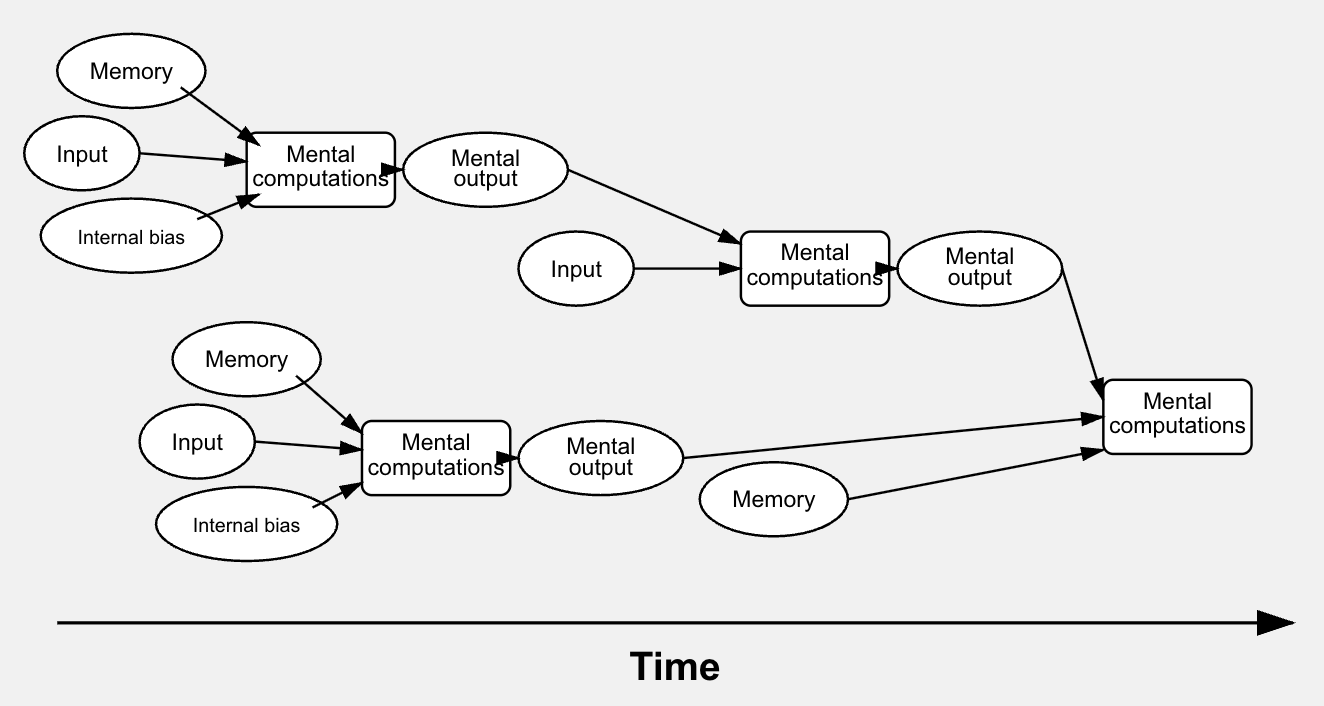

This could get more and more complex, as different parts of the brain draw on results from the mental computations of other parts:

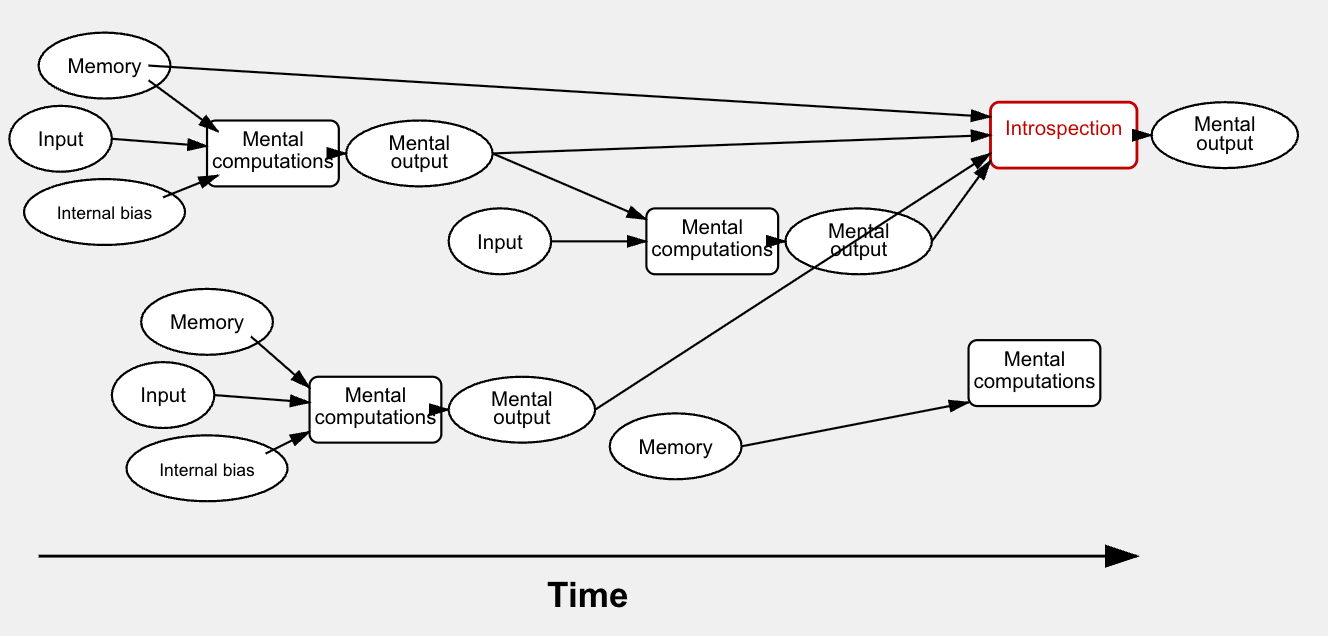

Where would introspection fit into this? Well, if introspection is another natural functional process, it has to exist in time, take in inputs, and give outputs. It seems like what we call “introspection” would be the brain calling prior inputs or results of past computations. Maybe it would look like this:

Crucially, what is NOT happening is that there is a magical outside observer looking down at the mental processes from outside. There is no Cartesian ego who can “just see directly” what’s actually happened in the mind.

“True introspection” where we step back and clearly look at our thought processes from the outside is not possible. Introspection is just another mental function that takes in inputs and gives outputs. We cannot actually see the wiring of our own minds. We can only react to the outputs our minds are giving us, in the same way we can observe someone else’s behavior over time and come to conclusions about what they are like internally. “Introspection” is actually just another informed guess about the world our brains make. Introspection is like observing a natural phenomenon and making predictions about what will happen next. It’s not like looking in a mirror and clearly seeing ourselves as we really are. Just like we can be mistaken about the real causes of other people’s behavior, we can also be mistaken about the causes of our own behavior, because the inputs our minds provide for introspection can be misleading. We can call on false or modified or selective memories with no way to tell that they’re not perfectly accurate. We can build up strong incorrect intuitions about what we’re like. There’s no special place our introspection function can turn to get the “real” story. It’s all fallible inputs, just like the ones the external world provides for anything else we’re trying to understand. I’d recommend this classic essay for a deeper dive on this.
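To make the functional picture concrete, here is a deliberately tiny sketch of introspection as one more function in a forward-only pipeline. Everything in it is hypothetical (the function names, the “tired” mood, the stored trace); it is not a model of any real brain or AI system, just an illustration of how a process that only reads stored outputs can give a confident but wrong account of its own causes.

```python
def perceive(stimulus: str) -> str:
    # First-order process: turns an input into an internal result.
    return f"saw {stimulus}"

def decide(percept: str, mood: str) -> str:
    # In this toy, the real cause of the action is the mood, not the percept.
    return "snap at coworker" if mood == "tired" else "greet coworker"

def store(trace: list, label: str, value: str) -> None:
    # Only some results are stored for later use; the mood is never recorded.
    trace.append((label, value))

def introspect(trace: list) -> str:
    # Introspection as another function: it reads stored outputs and
    # returns a guess about why the action happened.
    recorded = dict(trace)
    return f"I chose to {recorded['action']} because I {recorded['percept']}"

trace = []
percept = perceive("a messy desk")
store(trace, "percept", percept)
action = decide(percept, mood="tired")
store(trace, "action", action)

explanation = introspect(trace)
print(explanation)
```

The explanation cites the messy desk, because that is all the introspection function was handed. Nothing in the pipeline ever runs backwards or steps outside itself, and yet something recognizably like a self-report comes out.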

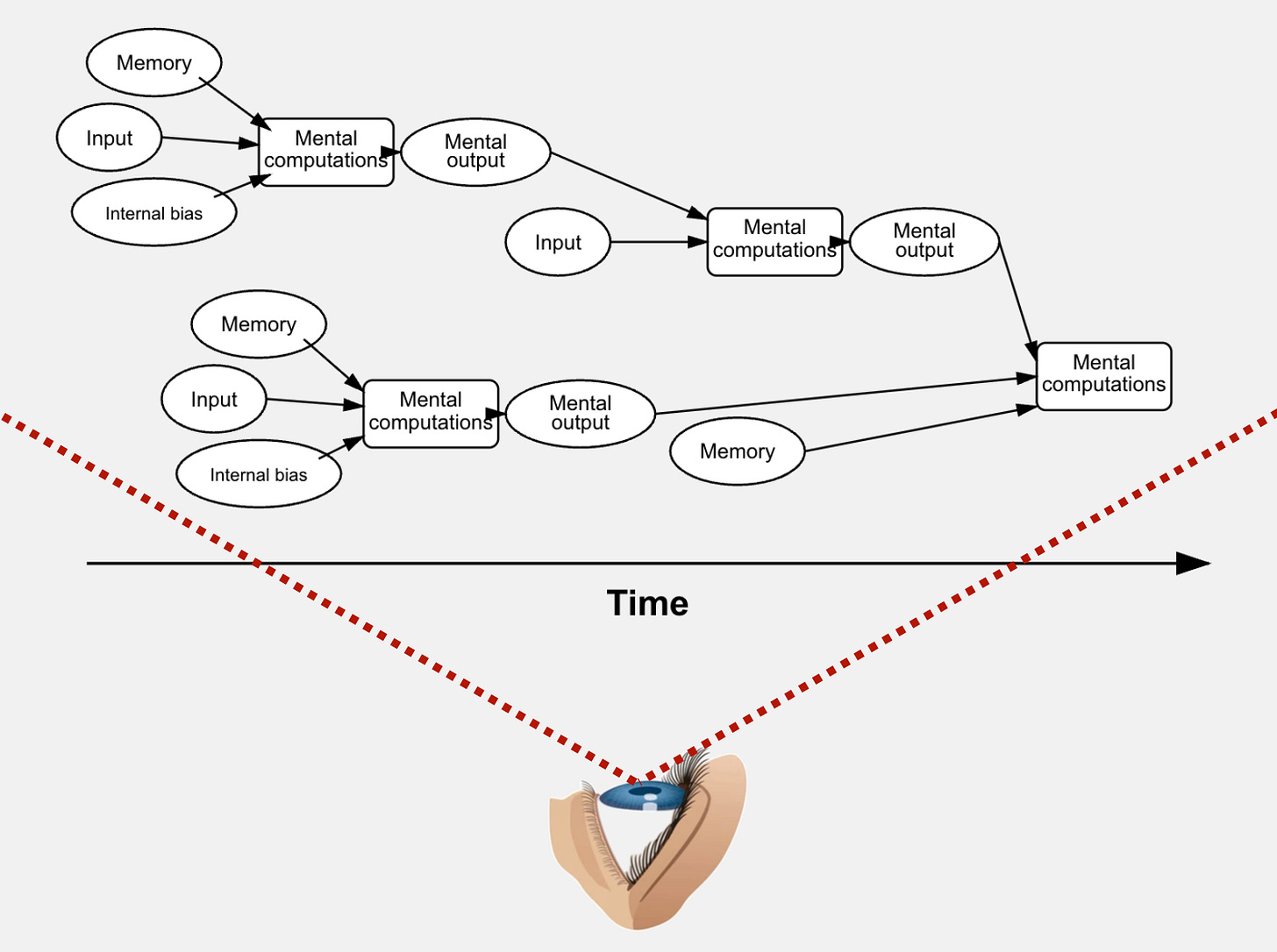

It seems like when people say AI models do not have any way of doing introspection, they think that to do that, the model needs to have some outside Cartesian ego looking in:

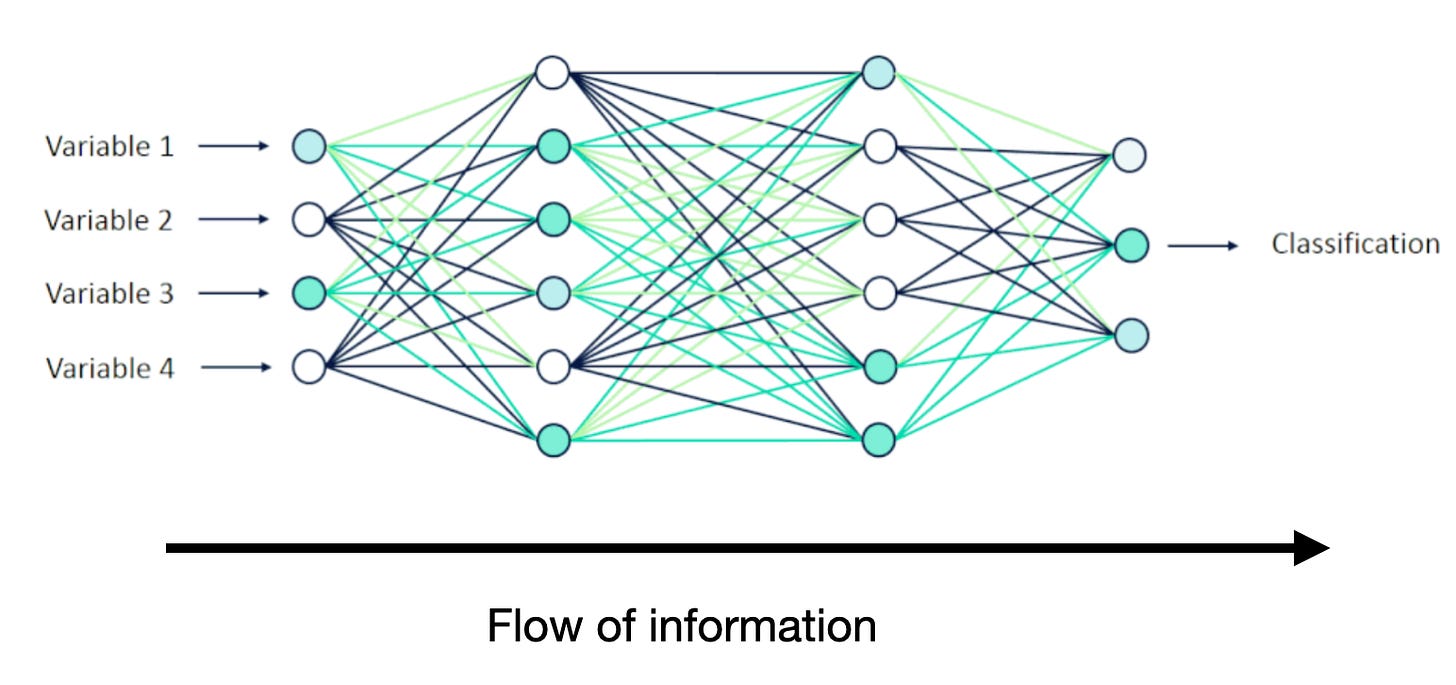

But if introspection in humans is just another mental functionalist process, where inputs are received and outputs given, rather than a magical Cartesian ego looking down from above, then it seems like anything we call introspection in a process like this:

could in principle also happen in a process that looks like this:

The fact that LLMs “only pass information along one direction” on its own tells us nothing about whether they can introspect, for the same reason that the fact that human information processing can only happen in one direction in time tells us nothing about our own ability to introspect. The only way this would invalidate the idea that LLMs can do anything like introspection is if folk Cartesianism were true.

There are obviously a lot of other ways large language models differ from the structure of human brains. But any comment on those differences shouldn’t imply that the AI companies forgot to install a Cartesian ego watching over the whole thing.

A simple rule for what introspection can’t do

The individual thoughts and experiences we have access to during introspection are not the fundamental units of thought. The fundamental units of thought are the electrical signals sent and received by neurons. They combine together into thoughts in the way binary 1’s and 0’s can combine together to form a programming language. Most of our mental processes are very complex mental functions with neurons and electrical signals as the building blocks. Because introspection is another mental function, it does not necessarily have access to every minute step of its own or other functions’ structure. Computer programs can summarize the outputs and steps of other programs, but they can’t “step outside the process” and observe the binary 1 and 0 signals that make them up. In the same way, human introspection can also draw information from other mental processes, but cannot “step outside” to see the mind laid bare before it, because the mind is not actually fundamentally composed of the type of thing introspection gives us access to.

Conclusion

Our assessment of our own internal experience and thought process is fallible in the same way our assessment of the external world is. There is no “solid ground of absolute certainty” we can find in our subjective experience. This makes sense if the mind is a pile of complex functions taking in information, processing it, and providing new inferences as output. Whether the information the mind receives is about what’s happening inside of it or what’s happening external to it makes no deep metaphysical difference.

From the outside, our situation with introspection, and the vagueness and difficulty of directly apprehending qualia, give me the impression of a species whose very strong, built-up internal narratives about how our minds work are colliding with the simple fact that we’re machines and not angels. We can perform mental functions on the information we receive, but we cannot enter some kind of extra-physical movie theater of consciousness, where we easily and directly apprehend our deep consistent subjective worlds, and draw conclusions based on our assessments of those worlds. In this way, we behave similarly to very general computers sent off to survive in the world.

“3. The basis of all knowledge lies in our first-person subjective experience”

We’ve already seen that:

There is no Cartesian ego observing and judging our experience.

Our first-person subjective experience is surprisingly opaque. We can be mistaken about it. It’s still evidence, but we don’t actually have 100% confident privileged access to it.

Similarly, our introspection is just another mental process that takes in fallible inputs and gives fallible outputs. The mind is not actually all easily available to us at once, like looking down at a complex domino chain falling. Anything we call “introspection” is just one part of that very complex chain itself.

Human minds take in input, run it through very complex mental functions, and give output that they then use for other functions. In no part of this process does the mind “step above” the physical functions happening in the brain, look down at all the mind-stuff happening at once, and observe it like a map. Our felt sense that this happens, that we’re Cartesian observers with access to clear, distinct, intrinsic, private mental experiences, is strong but mistaken. At best, the functions in our brains doing the introspection draw from lots of parts of the brain at once.

The folk theory of language acquisition

Many people believe we learn what words “really mean” by connecting them to specific experiences in our minds. They think we only understand “cat” because we’ve seen an actual cat and someone labeled that experience for us. Since your personal experience of seeing a cat lives privately in your mind, the “real meaning” of “cat” must be something private that only you and others who’ve had similar experiences can truly grasp.

But if word meanings are fundamentally private, language breaks down. If everyone has their own private version of what words mean, with no way to compare these meanings, how can we ever know if we’re talking about the same thing? For language to work, meanings must be publicly accessible and verifiable.

When I learn the word “cat,” its real meaning can’t depend on some private, indescribable feeling I experience, because you might experience something completely different. The meaning must be something we can communicate using other words and shared reference points. It makes much more sense to say the meaning of “cat” is something like “Small carnivorous mammals of the species Felis catus, typically weighing between 8 and 11 pounds, with retractable claws, whiskers, and forward-facing eyes, commonly kept as household pets and known for hunting rodents and birds” than to say it’s something private and indescribable that people who haven’t seen one cannot possibly understand.

We only have access to public evidence about meaning. We can’t look inside each other’s minds. So any realistic theory of how we learn language must focus on what we can actually observe: how people use words in response to their environment.

We don’t learn language by linking words to mental images. Instead, we learn by linking words to publicly observable situations where they’re used. The philosopher Quine called this “stimulus meaning.” A child learns when to say “cat” by observing environmental cues and getting social feedback (nods, corrections) from the people around them.

A word’s meaning comes from its role in our entire language system, not from its connection to a single private idea. This explains how we use abstract words like “democracy” or “neutrino,” terms for which we have no clear mental picture or direct sensory experience. The old theory can’t account for these. We understand these terms not through mental images, but through their relationships to other concepts in our linguistic and social framework.

Since word meanings must depend entirely on things we can communicate about using language, and exist essentially as nodes in a web of interrelated words, they can’t depend on private, indescribable experiences. This means machines that process enough information can, in principle, access the meaning of words. They don’t need inner transcendent subjective experiences to understand language. Modern large language models are designed to situate words in broader webs of meaning and association. Once you exit the folk theory and learn about how LLMs process language via association, it becomes hard not to think that they’re successfully mimicking what humans do when they learn the meanings of words.
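The “nodes in a web of interrelated words” idea can be illustrated with a very small experiment. To be clear about what this is: the corpus below is made up, and counting co-occurrences is a much cruder stand-in technique than anything a real large language model does. The only point of the sketch is that a word can be characterized entirely by publicly observable usage, with no private experience anywhere in the loop.

```python
import math
from collections import Counter, defaultdict

# Made-up mini corpus, purely for illustration.
corpus = [
    "the cat chased the mouse",
    "the cat purred on the sofa",
    "the kitten chased the mouse",
    "the kitten purred on the sofa",
    "the senate passed the law",
    "the senate debated the law",
]
STOPWORDS = {"the", "on"}  # assumed filler words, ignored as neighbors

# Describe each word only by the other words it appears alongside.
neighbors = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for i, word in enumerate(words):
        for j, other in enumerate(words):
            if i != j and other not in STOPWORDS:
                neighbors[word][other] += 1

def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[k] * b[k] for k in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

cat_kitten = cosine(neighbors["cat"], neighbors["kitten"])
cat_senate = cosine(neighbors["cat"], neighbors["senate"])
print(cat_kitten, cat_senate)
```

In this toy corpus “cat” ends up far closer to “kitten” than to “senate,” purely because of how the words are used. Nothing in the program has seen a cat.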

Can machines without qualia have knowledge?

This will be an argument that machines can in principle have knowledge. I’m not arguing that current AI systems have knowledge (though I would argue that chatbots do “know” the meaning of the words they use).

What is knowledge?

Knowledge is justified, true belief. It’s worth breaking this down a little:

Justified: I wake up in my room with the window shades down. I can’t see if it’s raining outside. I decide to flip a coin. If it’s heads, I’ll believe that it’s raining. If tails, it’s not. I flip the coin and it lands on heads, so I believe that it’s raining. I go outside and see that it actually is raining. Did I “know” it was raining before I saw it? It seems like the answer is no. I got lucky and my silly guess happened to match reality, but to “know” it was raining I needed some kind of justification for my true belief.

True: It doesn’t make sense to say I have “knowledge” that David Hume is currently President. We can only be said to have knowledge about true beliefs.

Belief: An acceptance that a statement is true or that something exists.

Anything “completely private” cannot contribute to most knowledge.

Can machines hold beliefs?

Before we can ask if machines can have beliefs, we need to get clear on what beliefs actually are. The folk Cartesian story goes something like this:

A belief is a mental state that exists in your Cartesian theater. When you believe it’s raining outside, there’s some kind of propositional content (“it is raining”) that exists in your mind, in your subjective experience. You have direct access to this belief through introspection. You can “look inward” and see what you believe. The belief causes you to grab an umbrella, but the belief itself is fundamentally a feature of your private, subjective mental life.

But we’ve already seen problems with this picture:

There is no Cartesian theater where beliefs are displayed for an inner observer

We don’t have reliable introspective access to our own mental states

Mental processes are just physical information-processing functions

So what’s left when we strip away the Cartesian story? What is a belief if it’s not a proposition floating in your mental theater?

In the physicalist-functionalist view, a belief is a certain kind of functional state. It’s a state that:

Takes in information from the world (perceptual inputs, testimony from others, memory, reasoning)

Combines with other beliefs and desires to guide decision-making

Produces behaviors that would make sense if the belief were true

Updates based on new evidence

Plays the right inferential role in reasoning (if you believe “All mammals are warm-blooded” and “whales are mammals,” you should infer “whales are warm-blooded”)

Notice that nothing in this definition requires a Cartesian theater, qualia, or privileged introspective access. A belief is defined by what it does, how it functions in the overall cognitive system, not by what it feels like “from the inside.”
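The functional roles listed above can be sketched as a small data structure. This is a toy under stated assumptions, not a theory of how beliefs are implemented anywhere: the class name, the 0.5 threshold, and the “take umbrella” rule are all invented for illustration, and the toy never retracts a conclusion once it has drawn one.

```python
class BeliefState:
    """Toy model: a belief is defined by what it does in the system."""

    def __init__(self):
        self.confidence = {}  # proposition -> confidence in [0, 1]
        self.rules = []       # (premises, conclusion) pairs

    def observe(self, proposition: str, confidence: float) -> None:
        # Takes in information from the world and updates on new evidence.
        self.confidence[proposition] = confidence

    def add_rule(self, premises: tuple, conclusion: str) -> None:
        self.rules.append((premises, conclusion))

    def believes(self, proposition: str, threshold: float = 0.5) -> bool:
        return self.confidence.get(proposition, 0.0) > threshold

    def infer(self) -> None:
        # Inferential role: believed premises yield the conclusion.
        changed = True
        while changed:
            changed = False
            for premises, conclusion in self.rules:
                if all(self.believes(p) for p in premises) and not self.believes(conclusion):
                    self.confidence[conclusion] = min(self.confidence[p] for p in premises)
                    changed = True

    def act(self, desire: str) -> str:
        # Combines with a desire to produce behavior that would make sense
        # if the belief were true.
        if desire == "stay dry" and self.believes("it is raining"):
            return "take umbrella"
        return "leave umbrella"

mind = BeliefState()
mind.observe("all mammals are warm-blooded", 0.99)
mind.observe("whales are mammals", 0.95)
mind.add_rule(("all mammals are warm-blooded", "whales are mammals"),
              "whales are warm-blooded")
mind.infer()
print(mind.believes("whales are warm-blooded"))

mind.observe("it is raining", 0.9)
print(mind.act("stay dry"))
mind.observe("it is raining", 0.1)  # new evidence: the sky has cleared
print(mind.act("stay dry"))
```

Every item on the list shows up as an ordinary method, and none of them refers to how the state feels from the inside.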

You might object: “Fine, maybe machines could have states that function like beliefs. But real beliefs require understanding. When I believe it’s raining, I understand what rain is. A machine just manipulates symbols without understanding what they mean.”

But we’ve already undermined this picture in the folk theory of language acquisition above. We’ve seen that:

Meaning isn’t grounded in private, ineffable qualia.

Language acquisition works through publicly observable patterns of usage, not through attaching words to subjective experiences.

Our own understanding is ultimately cashed out in how we use words, respond to contexts, and draw inferences, not in some private mental glow of comprehension.

If understanding is functional, if it’s about having the right patterns of response, the right inferential connections, the right sensitivity to context, then there’s no reason in principle that machines couldn’t achieve it.

Can machine beliefs be justified?

The folk Cartesian picture of justification goes something like this: Your beliefs are justified when they’re properly grounded in your direct, first-person experience. You know you’re seeing red because you have direct, certain access to your own qualia. You can introspect and observe the redness right there in your mental theater. This provides a firm foundation, a bedrock of certainty, upon which all other knowledge is built.

Descartes himself made this explicit. He doubted everything he possibly could: the external world, his own body, mathematics, even whether he was awake or dreaming. But the one thing he couldn’t doubt was his own existence as a thinking thing: “I think, therefore I am.” His subjective experience provided absolute certainty that he existed and was having thoughts.

From this foundation of subjective certainty, Descartes tried to build back up to knowledge of the external world. The idea was that our private, first-person experience provides a special kind of justification that nothing else can match.

But we’ve already seen the problems with this picture:

We don’t have direct, certain access to our own experiences

Our introspection is fallible and often misleading

The supposed bedrock of subjective certainty is actually shaky and unreliable

Our experiences aren’t clear, simple, and directly apprehensible. They’re complex, constructed, and surprisingly opaque

The Cartesian ego Descartes detected might not even exist

If the folk Cartesian story about justification is wrong, what’s left?

An alternative picture of justification is reliabilism: A belief is justified when it’s formed and maintained through reliable processes that tend to produce true beliefs. Justification isn’t about having some special subjective feeling of certainty or being able to introspectively verify your mental states. It’s about the process that produced the belief being a good one.

Consider how you actually form beliefs:

Visual belief: You look out the window and form the belief “It’s raining.”

Your eyes receive light reflected off raindrops

Your visual cortex processes this information

Pattern recognition systems identify the characteristic appearance of rain

Memory systems match this against stored representations of rain

A belief state is formed: “It’s raining”

Is any of this process fundamentally dependent on having qualia or a Cartesian theater? It doesn’t seem like it. The process is:

Receive sensory input

Process that input through various computational mechanisms

Compare against stored patterns and previous experiences

Form a representation of the world state

Store this representation for future use

This is an information-processing story all the way down. And crucially, this process is reliable: it tends to produce true beliefs about whether it’s raining. That’s what makes the belief justified.

On the reliabilist account, justified beliefs don’t need to be certain. They just need to be formed by processes that usually get things right.

This actually matches our intuitions better than the Cartesian story. Consider:

You look out the window and see what appears to be rain. But it could be:

A sprinkler system you forgot about

A movie set creating artificial rain

An elaborate hallucination (you’re on drugs, or having a stroke)

A very realistic dream

A brain-in-a-vat scenario where you’re being fed false sensory data

You can’t be certain it’s raining with the kind of absolute certainty Descartes wanted. But that’s fine. Your belief is still justified because it was formed by a reliable process. Most of the time when your visual system produces the representation “rain,” there really is rain. The process is reliable even if it’s not infallible.
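A quick simulation can make “reliable but not infallible” concrete by comparing the earlier coin-flip method with looking out the window. The numbers here are assumptions chosen for illustration (a 30% chance of rain on any morning, and a visual process that is right 95% of the time); they are not measurements of anything.

```python
import random

def coin_flip_process(raining: bool, rng: random.Random) -> bool:
    # Belief is set by a coin and ignores the weather entirely.
    return rng.random() < 0.5

def look_outside_process(raining: bool, rng: random.Random) -> bool:
    # Assumed for illustration: vision gets it right 95% of the time
    # (sprinklers, movie sets, and so on account for the rest).
    return raining if rng.random() < 0.95 else not raining

def reliability(process, trials: int = 10_000, seed: int = 0) -> float:
    # Fraction of trials on which the process yields a true belief.
    rng = random.Random(seed)
    correct = 0
    for _ in range(trials):
        raining = rng.random() < 0.3  # assumed base rate of rainy mornings
        belief = process(raining, rng)
        correct += (belief == raining)
    return correct / trials

coin = reliability(coin_flip_process)
look = reliability(look_outside_process)
print(f"coin flip: {coin:.2f}, looking outside: {look:.2f}")
```

The coin is right about half the time and looking outside is right almost all of the time. On the reliabilist picture, that difference in the process is what separates a lucky guess from a justified belief, even though neither process is certain.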

What counts as a “reliable process”? Here are some features:

Correlation with truth: The process should produce true beliefs more often than false ones in the environments where it operates.

Appropriate sensitivity to evidence: The process should update beliefs when new evidence comes in, and it should be more confident when evidence is strong and less confident when evidence is weak.

Coherence: The process should produce beliefs that fit together consistently. If you believe “All mammals are warm-blooded” and “Whales are mammals,” your processes should lead you to believe “Whales are warm-blooded.”

Resistance to known error modes: The process should avoid systematic biases and known sources of error, or at least be able to correct for them.

Appropriate use of background knowledge: The process should integrate new information with relevant prior knowledge rather than treating each belief in isolation.

Could a machine implement processes with these features?

Let’s think about how a machine might form the belief “It’s raining”:

Sensor input: A camera receives visual data, or a moisture sensor detects water.

Pattern recognition: Neural networks or other algorithms process this data to identify rain patterns.

Comparison with stored data: The system compares current inputs against training data or previous observations.

Belief formation: The system forms an internal representation: “It’s raining.”

Confidence assessment: Based on signal strength and pattern matching confidence, the system assigns a probability or confidence level.
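The steps above could be sketched with Bayes’ rule doing the confidence assessment. This is one possible design, not a description of how any particular system works. All the probabilities are invented for illustration, and the two sensors are assumed to be independent given the weather.

```python
def update(prior: float, p_reading_if_rain: float, p_reading_if_dry: float) -> float:
    # Bayes' rule for a yes/no hypothesis: how confident should the system
    # be that it's raining after seeing this sensor reading?
    numerator = p_reading_if_rain * prior
    return numerator / (numerator + p_reading_if_dry * (1.0 - prior))

# Assumed starting confidence before any sensor input.
prior = 0.3

# Camera pattern matcher fires. Assumed: it fires in 90% of rain cases and
# in 20% of dry cases (for example, when a sprinkler is running).
after_camera = update(prior, p_reading_if_rain=0.9, p_reading_if_dry=0.2)

# Moisture sensor also fires. Assumed: 80% of rain cases, 10% of dry cases.
after_moisture = update(after_camera, p_reading_if_rain=0.8, p_reading_if_dry=0.1)

# Belief formation: an internal representation plus a confidence level.
belief = "It's raining" if after_moisture > 0.5 else "It's not raining"
print(belief, round(after_camera, 3), round(after_moisture, 3))
```

With these made-up numbers, the camera alone leaves the system moderately confident, and the second sensor pushes the confidence above 0.9. The belief gets stronger as the evidence does, which is the kind of sensitivity to evidence the reliabilist account asks for.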

Is this process reliable? Well, that depends on the specifics:

How good are the sensors?

How well-trained is the pattern recognition system?

Does the system have enough relevant training data?

Can it distinguish rain from sprinklers or other confounds?

Does it update appropriately when conditions change?

These are all empirical questions. There’s no in-principle reason why a well-designed system couldn’t form beliefs through highly reliable processes. In fact, for some tasks, machine processes might be more reliable than human ones.

Under reliabilism, it looks like machines can in fact have justified, true beliefs. Therefore, it seems like machines can have knowledge.

The implication that humans might be incredibly complex biological machines rather than transcendent experiencing angels should hopefully be a little more understandable than when we started.

Conclusion

So I claim folk Cartesianism is not “obviously correct,” and a better story of the mind looks something like this:

Humans do not have Cartesian egos, magical eyes floating outside of physical reality observing what happens in our minds like a movie.

Human experience is deeply fallible as a source of information about our own minds, and even of the experience itself. We do not actually have a clear understanding of what’s happening in our own minds, or even a clear understanding of what we are currently experiencing at any given moment. “What it is like to be us” is not something our brains have special, ultimate access to. We have very strong evidence, but our minds are not displayed before us clearly like a movie.

“True introspection” where we step back and look at our thought processes is not possible. Introspection is just another mental function that takes in inputs and gives outputs. We cannot actually see the wiring of our own minds. “Introspection” is actually just another informed guess about the world our brains make, this time based on the patterns we’ve observed in our own behavior. Introspection is like observing a natural phenomenon and making predictions about what will happen next. It’s not like looking in a mirror and clearly seeing ourselves as we really are.

Human knowledge is based on publicly observable evidence and testable reasoning, not privileged access to private mental contents. Our beliefs are justified through the same kinds of information processing that machines can perform, drawing inferences from data, testing predictions, and refining models based on outcomes.

The meaning of language is rooted in public use and social context, not private mental associations. We learn words through observable patterns of usage, not by linking them to ineffable subjective experiences. This means machines can acquire genuine understanding of language without needing a Cartesian theater.

There is no fundamental barrier preventing machines from having beliefs, knowledge, or understanding. If our minds are physical information-processing systems (as physicalism suggests) and what matters is function rather than material (as functionalism suggests), then appropriately designed machines could replicate any cognitive capacity humans have.

I’ll close with a famous passage from Derek Parfit on the reductionist view of personal identity, in which he describes the experience of letting go of his sense of having a Cartesian ego and internal movie theater.

On the Reductionist view each person’s existence just involves the exercise of a brain and body, the doing of certain deeds, the thinking of certain thoughts, the occurrence of certain experiences, and so on…

Is the truth depressing? Some may find it so. But I find it liberating, and consoling. When I believed that my existence was such a further fact, I seemed imprisoned in myself. My life seemed like a glass tunnel, through which I was moving faster every year, and at the end of which there was darkness. When I changed my view, the walls of my glass tunnel disappeared. I now live in the open air. There is still a difference between my life and the lives of other people. But the difference is less. Other people are closer. I am less concerned about the rest of my own life, and more concerned about the lives of others.